This is the multi-page printable view of this section.

Click here to print.

Return to the regular view of this page.

Amazon EKS Anywhere

EKS Anywhere documentation homepage

EKS Anywhere is container management software built by AWS that makes it easier to run and manage Kubernetes clusters on-premises and at the edge. EKS Anywhere is built on EKS Distro

, which is the same reliable and secure Kubernetes distribution used by Amazon Elastic Kubernetes Service (EKS)

in AWS Cloud. EKS Anywhere simplifies Kubernetes cluster management through the automation of undifferentiated heavy lifting such as infrastructure setup and Kubernetes cluster lifecycle operations.

Unlike Amazon EKS in AWS Cloud, EKS Anywhere is a user-managed product that runs on user-managed infrastructure. You are responsible for cluster lifecycle operations and maintenance of your EKS Anywhere clusters.

If you have on-premises or edge environments with reliable connectivity to an AWS Region, consider using EKS Hybrid Nodes

or EKS on Outposts

to benefit from AWS-managed EKS control planes and a consistent experience with EKS in the AWS Cloud.

The tenets of the EKS Anywhere project are:

- Simple: Make using a Kubernetes distribution simple and boring (reliable and secure).

- Opinionated Modularity: Provide opinionated defaults about the best components to include with Kubernetes, but give customers the ability to swap them out

- Open: Provide open source tooling backed, validated and maintained by Amazon

- Ubiquitous: Enable customers and partners to integrate a Kubernetes distribution in the most common tooling.

- Stand Alone: Provided for use anywhere without AWS dependencies

- Better with AWS: Enable AWS customers to easily adopt additional AWS services

1 - Overview

What is EKS Anywhere?

EKS Anywhere is container management software built by AWS that makes it easier to run and manage Kubernetes clusters on-premises and at the edge. EKS Anywhere is built on EKS Distro

, which is the same reliable and secure Kubernetes distribution used by Amazon Elastic Kubernetes Service (EKS)

in AWS Cloud. EKS Anywhere simplifies Kubernetes cluster management through the automation of undifferentiated heavy lifting such as infrastructure setup and Kubernetes cluster lifecycle operations.

Unlike Amazon EKS in AWS Cloud, EKS Anywhere is a user-managed product that runs on user-managed infrastructure. You are responsible for cluster lifecycle operations and maintenance of your EKS Anywhere clusters. EKS Anywhere is open source and free to use at no cost. To receive support for your EKS Anywhere clusters, you can optionally purchase EKS Anywhere Enterprise Subscriptions

for 24/7 support from AWS subject matter experts and access to EKS Anywhere Curated Packages

. EKS Anywhere Curated Packages are software packages that are built, tested, and supported by AWS and extend the core functionalities of Kubernetes on your EKS Anywhere clusters.

EKS Anywhere supports many different types of infrastructure including VMWare vSphere, Bare Metal, Nutanix, Apache CloudStack, and AWS Snow. You can run EKS Anywhere without a connection to AWS Cloud and in air-gapped environments, or you can optionally connect to AWS Cloud to integrate with other AWS services. You can use the EKS Connector

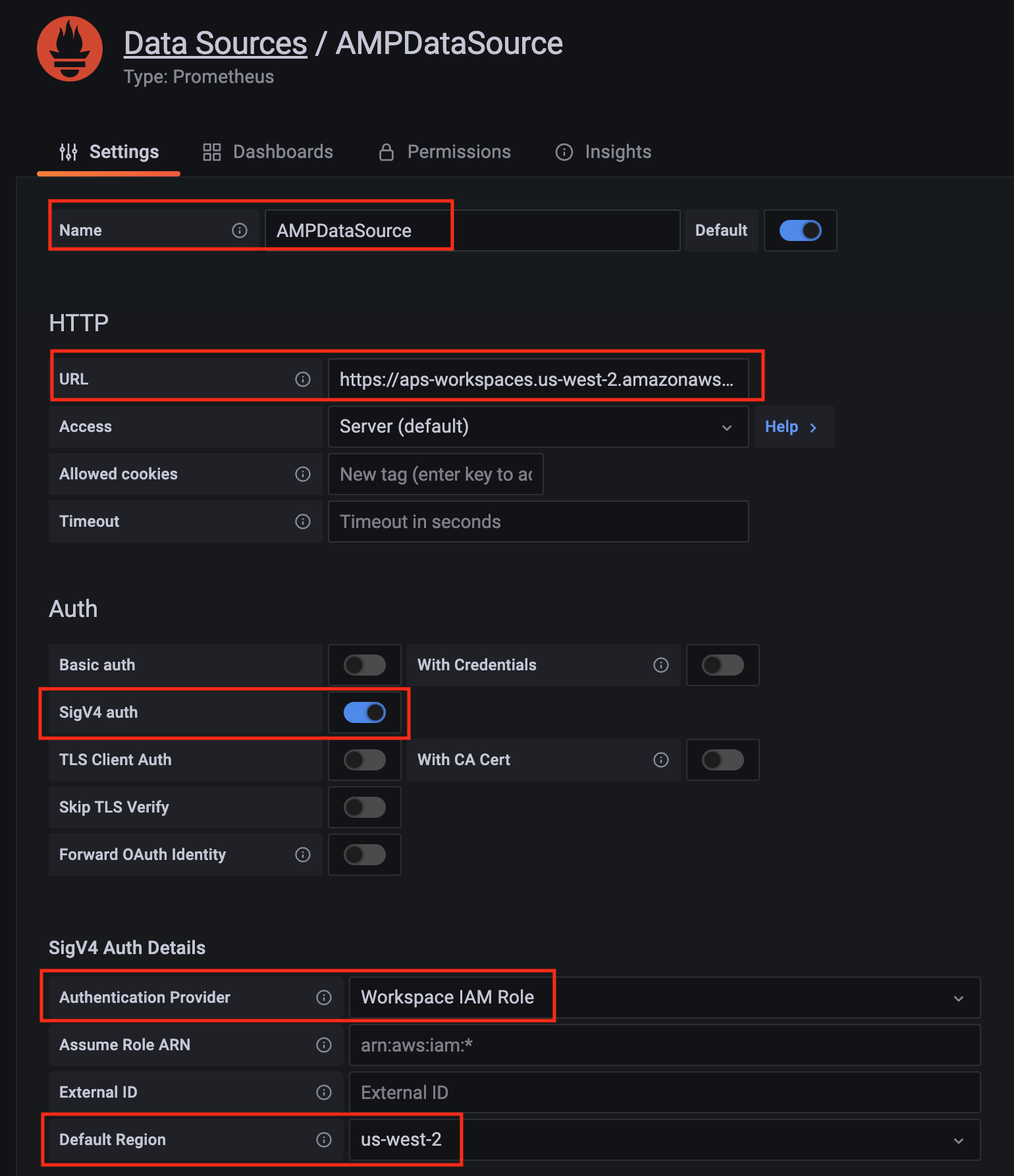

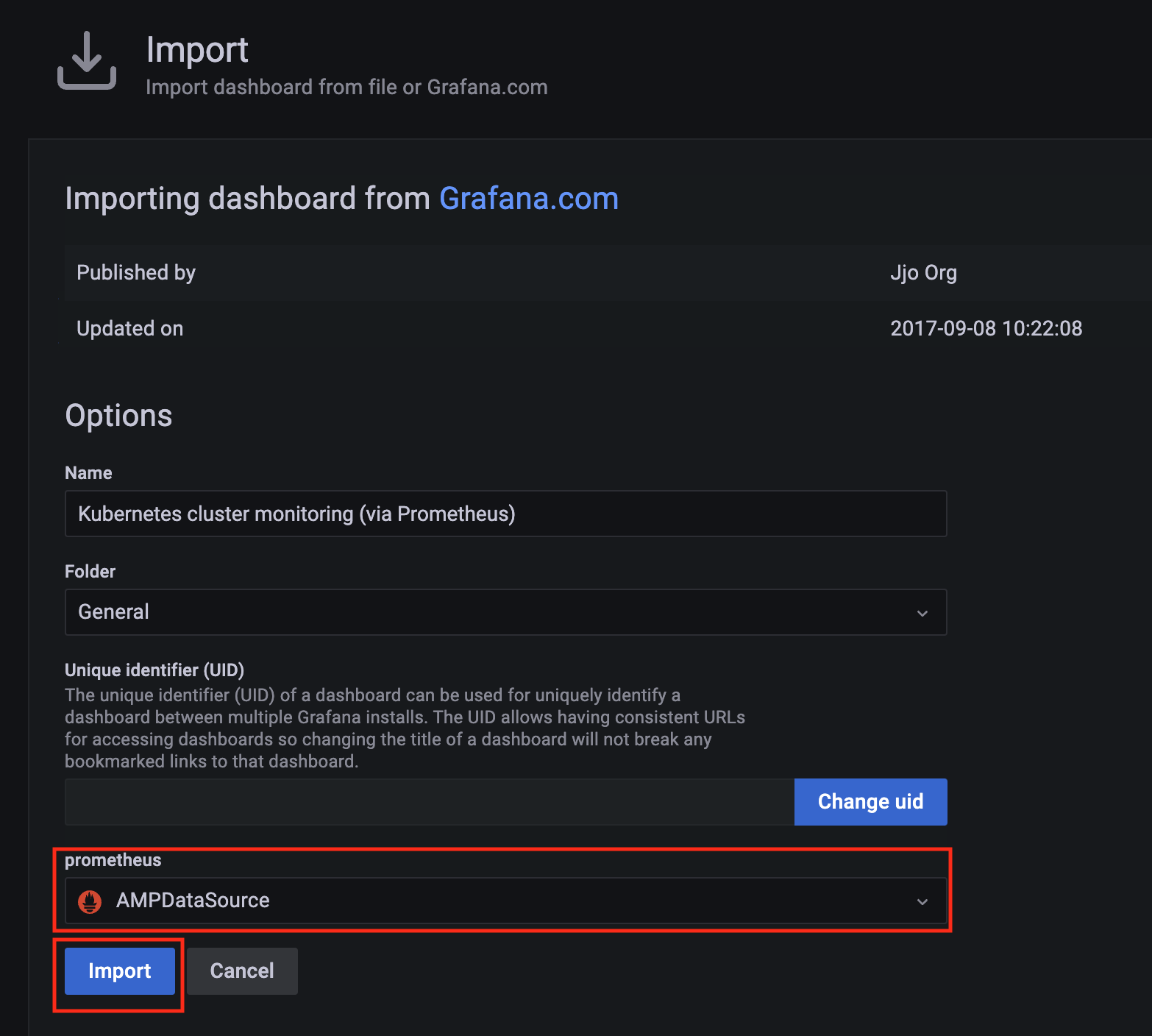

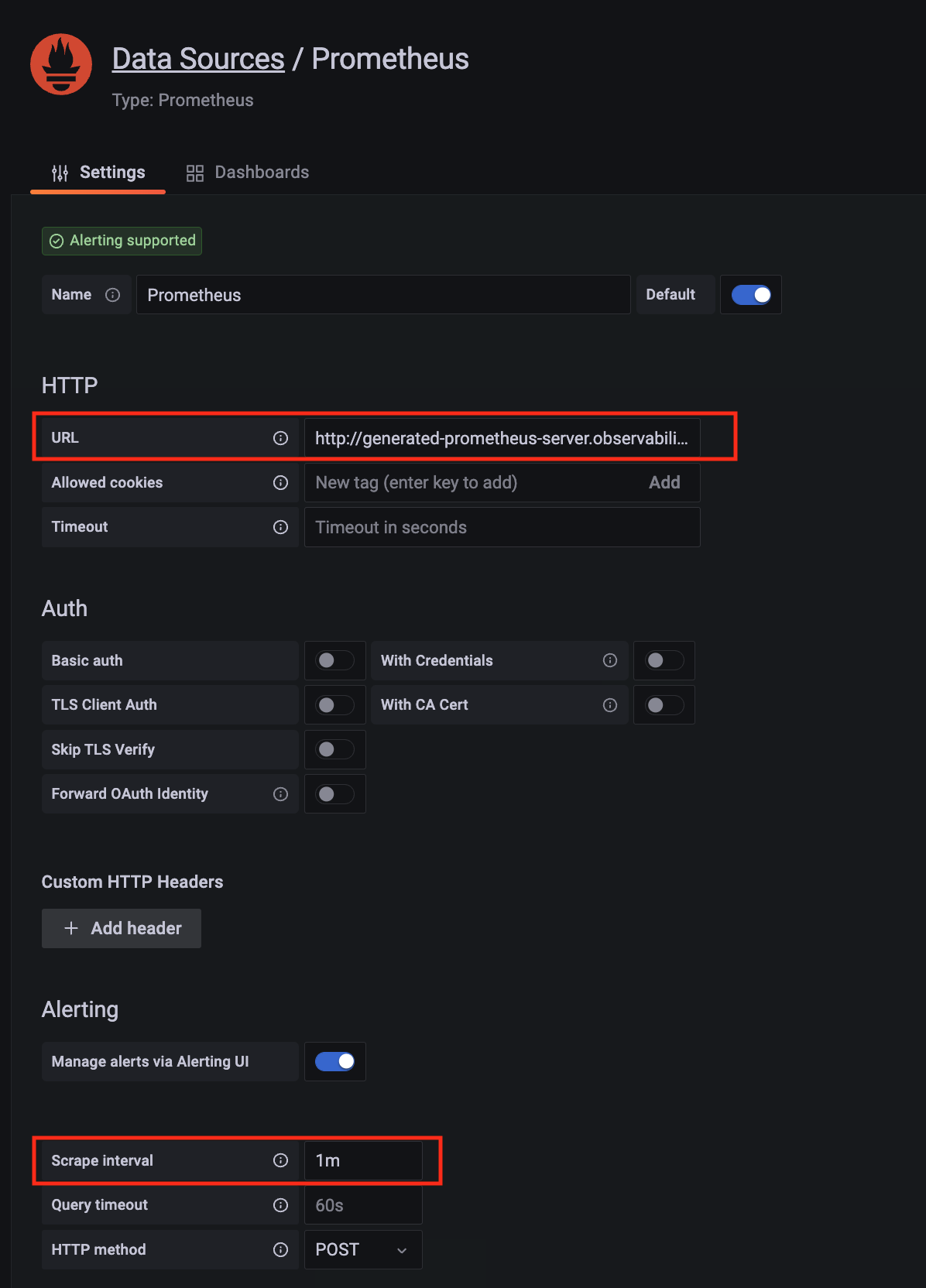

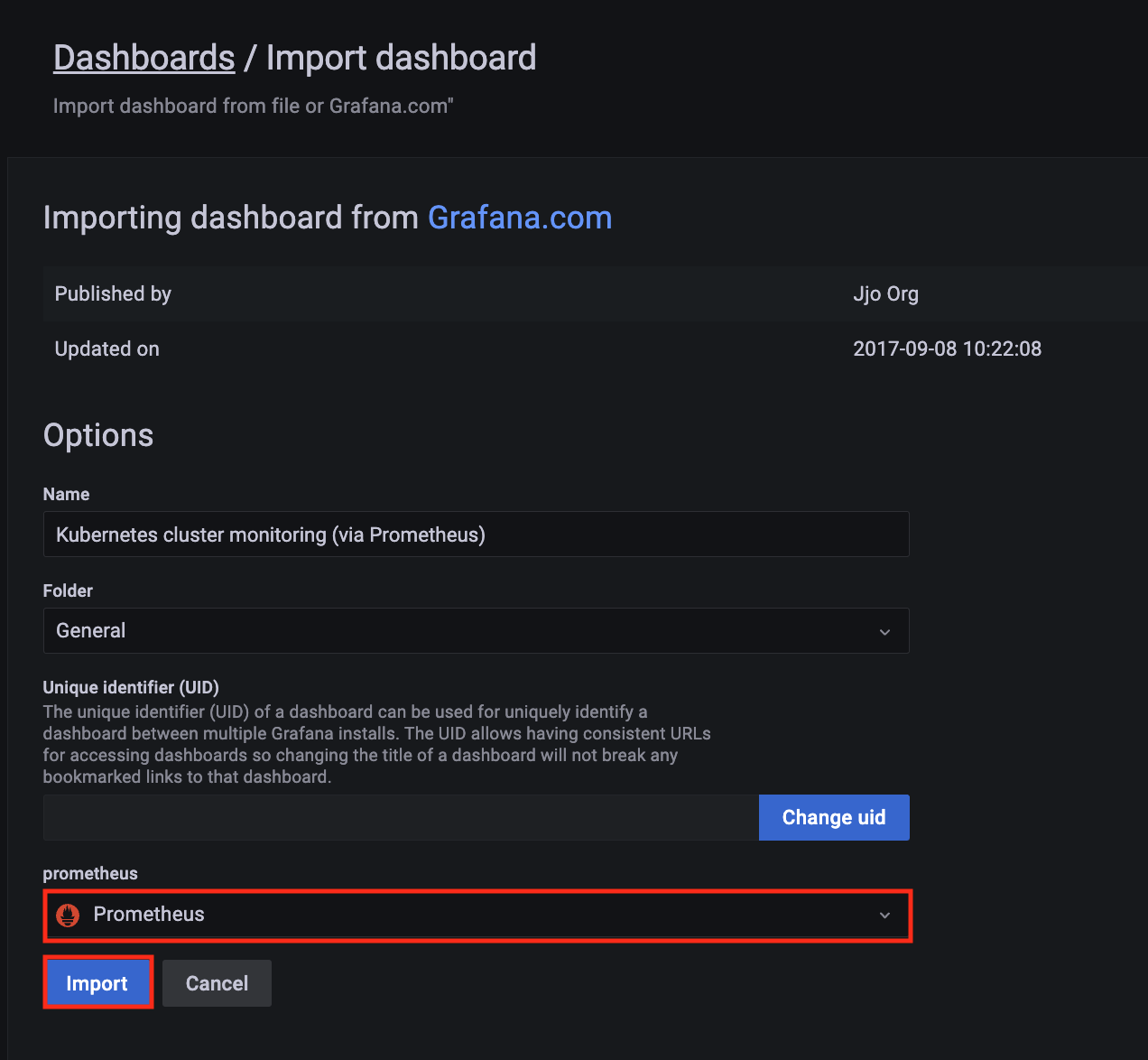

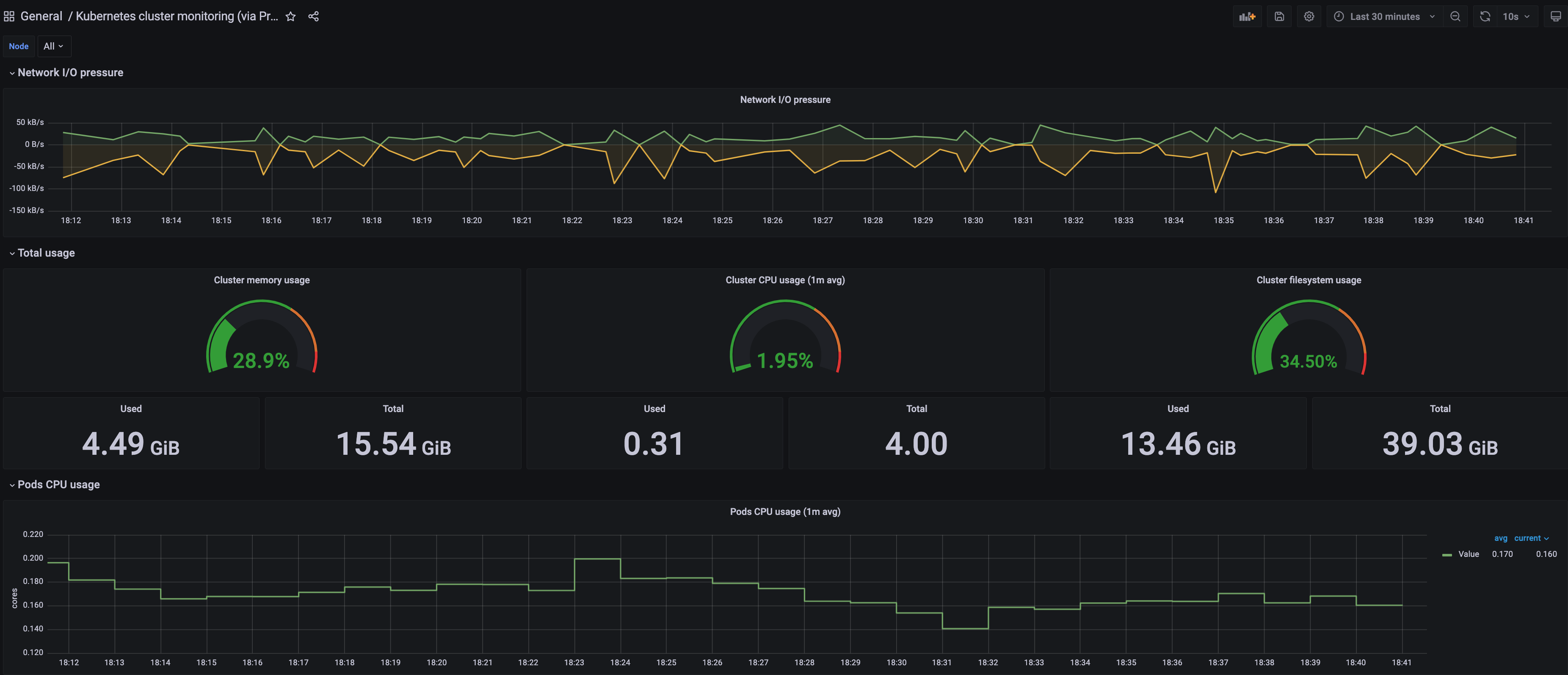

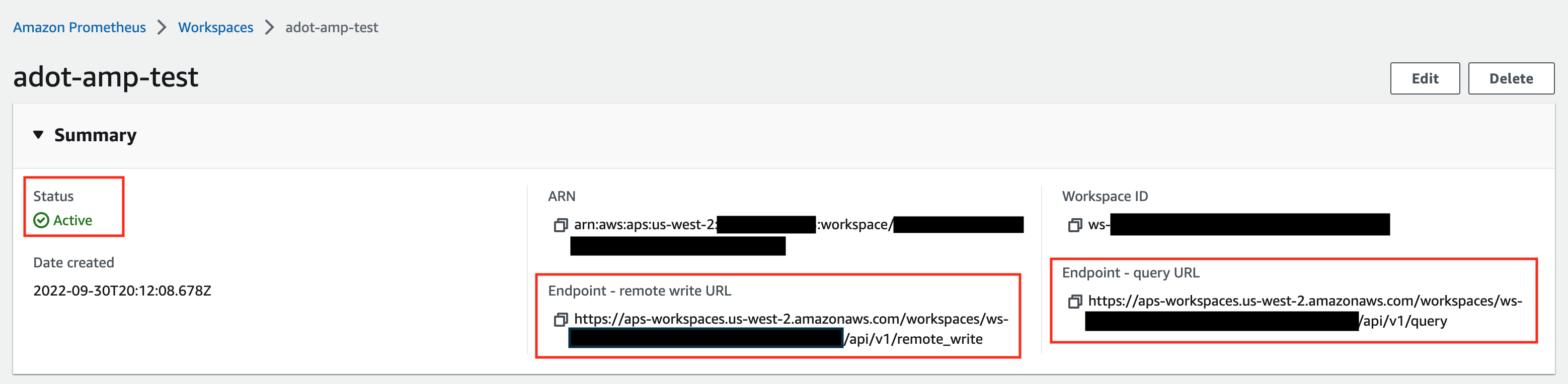

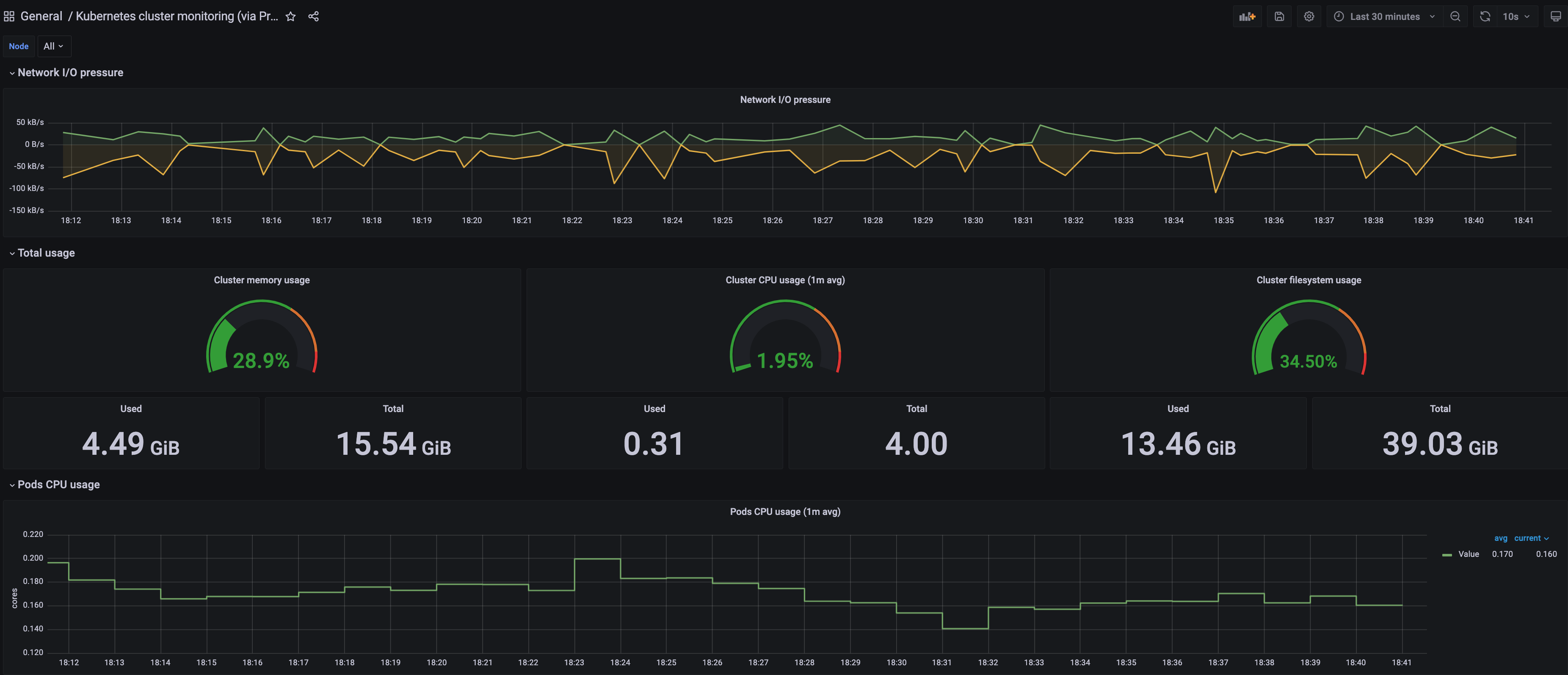

to view your EKS Anywhere clusters in the Amazon EKS console, AWS IAM to authenticate to your EKS Anywhere clusters, IAM Roles for Service Accounts (IRSA) to authenticate Pods with other AWS services, and AWS Distro for OpenTelemetry to send metrics to Amazon Managed Prometheus for monitoring cluster resources.

If you have on-premises or edge environments with reliable connectivity to an AWS Region, consider using EKS Hybrid Nodes

or EKS on Outposts

to benefit from the AWS-managed EKS control plane and consistent experience with EKS in AWS Cloud.

EKS Anywhere is built on the Kubernetes sub-project called Cluster API

(CAPI), which is focused on providing declarative APIs and tooling to simplify the provisioning, upgrading, and operating of multiple Kubernetes clusters. While EKS Anywhere simplifies and abstracts the CAPI primitives, it is useful to understand the basics of CAPI when using EKS Anywhere.

Why EKS Anywhere?

- Simplify and automate Kubernetes management on-premises

- Unify Kubernetes distribution and support across on-premises, edge, and cloud environments

- Adopt modern operational practices and tools on-premises

- Build on open source standards

Common Use Cases

- Modernize on-premises applications from virtual machines to containers

- Internal development platforms to standardize how teams consume Kubernetes across the organization

- Telco 5G Radio Access Networks (RAN) and Core workloads

- Regulated services in private data centers on-premises

What’s Next?

1.1 - Frequently Asked Questions

Frequently asked questions about EKS Anywhere

AuthN / AuthZ

How do my applications running on EKS Anywhere authenticate with AWS services using IAM credentials?

You can now leverage the IAM Role for Service Account (IRSA)

feature

by following the IRSA reference

guide for details.

Does EKS Anywhere support OIDC (including Azure AD and AD FS)?

Yes, EKS Anywhere can create clusters that support API server OIDC authentication.

This means you can federate authentication through AD FS locally or through Azure AD, along with other IDPs that support the OIDC standard.

In order to add OIDC support to your EKS Anywhere clusters, you need to configure your cluster by updating the configuration file before creating the cluster.

Please see the OIDC reference

for details.

Does EKS Anywhere support LDAP?

EKS Anywhere does not support LDAP out of the box.

However, you can look into the Dex LDAP Connector

.

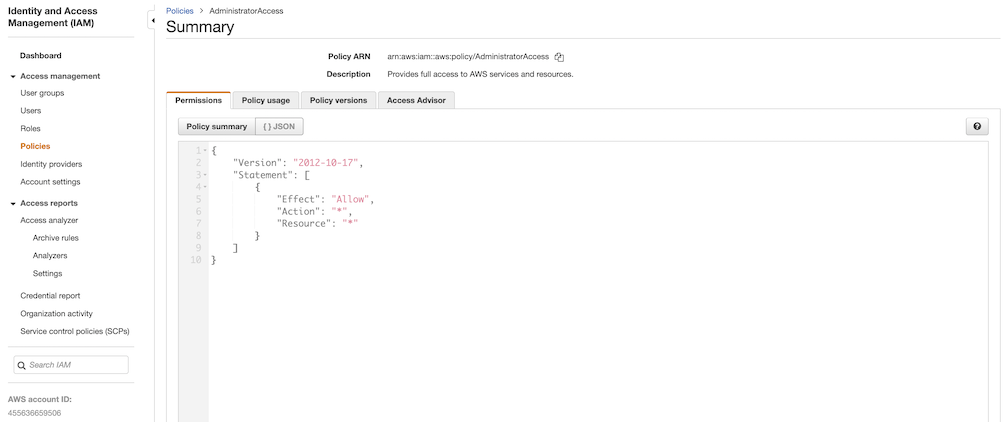

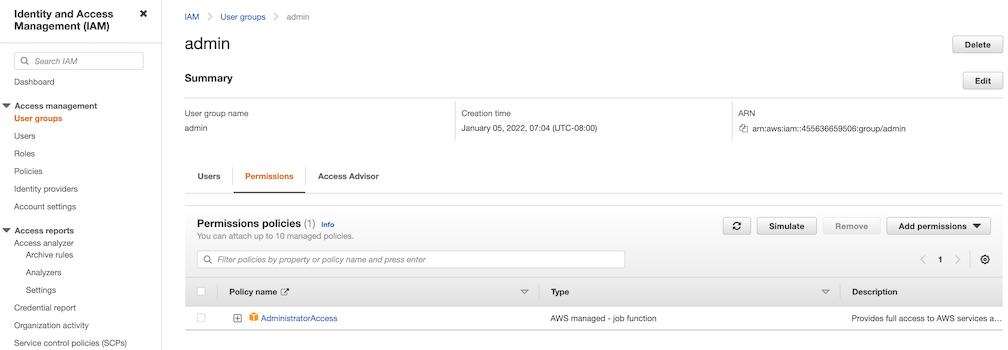

Can I use AWS IAM for Kubernetes resource access control on EKS Anywhere?

Yes, you can install the aws-iam-authenticator

on your EKS Anywhere cluster to achieve this.

Miscellaneous

How much does EKS Anywhere cost?

EKS Anywhere is free, open source software that you can download, install on your existing hardware, and run in your own data centers.

It includes management and CLI tooling for all supported cluster topologies

on all supported providers

.

You are responsible for providing infrastructure where EKS Anywhere runs (e.g. VMware, bare metal), and some providers require third party hardware and software contracts.

The EKS Anywhere Enterprise Subscription

provides access to curated packages and enterprise support.

This is an optional—but recommended—cost based on how many clusters and how many years of support you need.

Can I connect my EKS Anywhere cluster to EKS?

Yes, you can install EKS Connector to connect your EKS Anywhere cluster to AWS EKS.

EKS Connector is a software agent that you can install on the EKS Anywhere cluster that enables the cluster to communicate back to AWS.

Once connected, you can immediately see a read-only view of the EKS Anywhere cluster with workload and cluster configuration information on the EKS console, alongside your EKS clusters.

How does the EKS Connector authenticate with AWS?

During start-up, the EKS Connector generates and stores an RSA key-pair as Kubernetes secrets.

It also registers with AWS using the public key and the activation details from the cluster registration configuration file.

The EKS Connector needs AWS credentials to receive commands from AWS and to send the response back.

Whenever it requires AWS credentials, it uses its private key to sign the request and invokes AWS APIs to request the credentials.

How does the EKS Connector authenticate with my Kubernetes cluster?

The EKS Connector acts as a proxy and forwards the EKS console requests to the Kubernetes API server on your cluster.

In the initial release, the connector uses impersonation

with its service account secrets to interact with the API server.

Therefore, you need to associate the connector’s service account with a ClusterRole,

which gives permission to impersonate AWS IAM entities.

How do I enable an AWS user account to view my connected cluster through the EKS console?

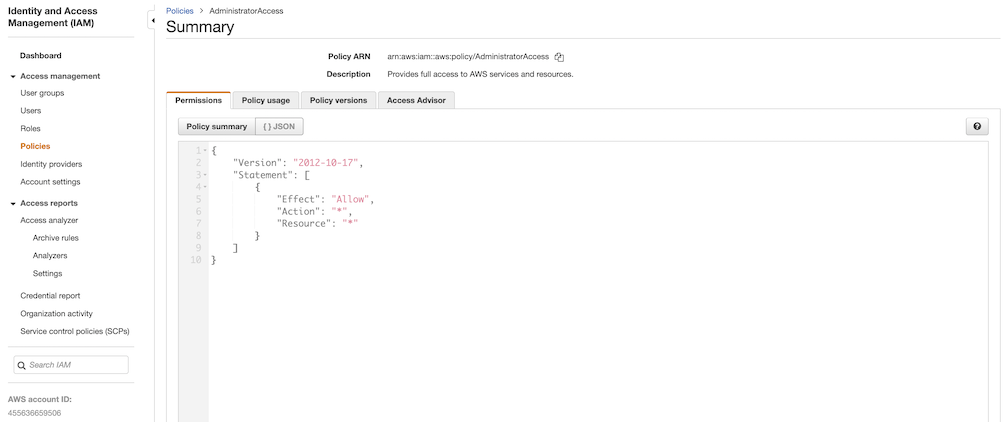

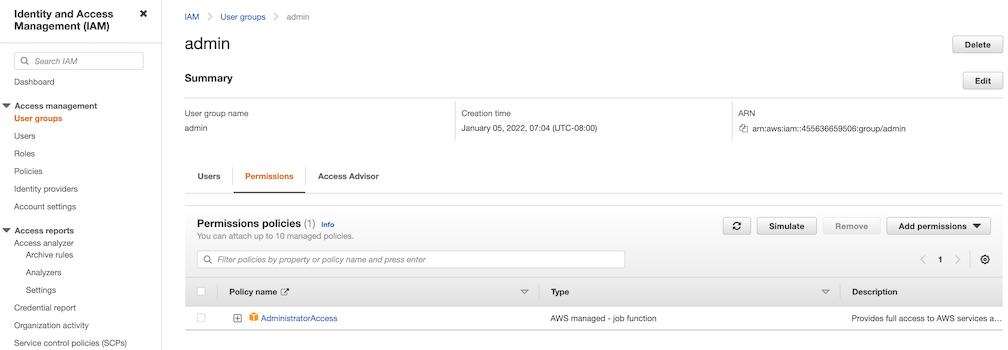

For each AWS user or other IAM identity, you should add cluster role binding to the Kubernetes cluster with the appropriate permission for that IAM identity.

Additionally, each of these IAM entities should be associated with the IAM policy

to invoke the EKS Connector on the cluster.

Can I use Amazon Controllers for Kubernetes (ACK) on EKS Anywhere?

Yes, you can leverage AWS services from your EKS Anywhere clusters on-premises through Amazon Controllers for Kubernetes (ACK)

.

Can I deploy EKS Anywhere on other clouds?

EKS Anywhere can be installed on any infrastructure with the required Bare Metal, Cloudstack, or VMware vSphere components.

See EKS Anywhere Baremetal

, CloudStack

, or vSphere

documentation.

How is EKS Anywhere different from ECS Anywhere?

Amazon ECS Anywhere

is an option for Amazon Elastic Container Service (ECS)

to run containers on your on-premises infrastructure.

The ECS Anywhere Control Plane runs in an AWS region and allows you to install the ECS agent on worker nodes that run outside of an AWS region.

Workloads that run on ECS Anywhere nodes are scheduled by ECS.

You are not responsible for running, managing, or upgrading the ECS Control Plane.

EKS Anywhere runs the Kubernetes Control Plane and worker nodes on your infrastructure.

You are responsible for managing the EKS Anywhere Control Plane and worker nodes.

There is no requirement to have an AWS account to run EKS Anywhere.

If you’d like to see how EKS Anywhere compares to EKS please see the information here.

How can I manage EKS Anywhere at scale?

You can perform cluster life cycle and configuration management at scale through GitOps-based tools.

EKS Anywhere offers git-driven cluster management through the integrated Flux Controller.

See Manage cluster with GitOps

documentation for details.

Can I run EKS Anywhere on ESXi?

No. EKS Anywhere is only supported on providers listed on the EKS Anywhere providers

page.

There would need to be a change to the upstream project to support ESXi.

Can I deploy EKS Anywhere on a single node?

Yes. Single node cluster deployment is supported for Bare Metal. See workerNodeGroupConfigurations

1.2 - Partners

EKS Anywhere validated partners

Amazon EKS Anywhere maintains relationships with third-party vendors to provide add-on solutions for EKS Anywhere clusters.

A complete list of these partners is maintained on the Amazon EKS Anywhere Partners

page.

See Conformitron: Validate third-party software with Amazon EKS and Amazon EKS Anywhere

for information on how conformance testing and quality assurance is done on this software.

The following shows validated EKS Anywhere partners whose products have passed conformance test for specific EKS Anywhere providers and versions:

Kubernetes Version : 1.27

Date of Conformance Test : 2024-05-02

Following ISV Partners have Validated their Conformance :

VENDOR_PRODUCT VENDOR_PRODUCT_TYPE VENDOR_PRODUCT_VERSION

aqua aqua-enforcer 2022.4.20

dynatrace dynatrace 0.10.1

komodor k8s-watcher 1.15.5

kong kong-enterprise 2.27.0

accuknox kubearmor v1.3.2

kubecost cost-analyzer 2.1.0

nirmata enterprise-kyverno 1.6.10

lacework polygraph 6.11.0

newrelic nri-bundle 5.0.64

perfectscale perfectscale v0.0.38

pulumi pulumi-kubernetes-operator 0.3.0

solo.io solo-istiod 1.18.3-eks-a

sysdig sysdig-agent 1.6.3

tetrate.io tetrate-istio-distribution 1.18.1

hashicorp vault 0.25.0

vSphere provider validated partners

Kubernetes Version : 1.28

Date of Conformance Test : 2024-05-02

Following ISV Partners have Validated their Conformance :

VENDOR_PRODUCT VENDOR_PRODUCT_TYPE VENDOR_PRODUCT_VERSION

aqua aqua-enforcer 2022.4.20

dynatrace dynatrace 0.10.1

komodor k8s-watcher 1.15.5

kong kong-enterprise 2.27.0

accuknox kubearmor v1.3.2

kubecost cost-analyzer 2.1.0

nirmata enterprise-kyverno 1.6.10

lacework polygraph 6.11.0

newrelic nri-bundle 5.0.64

perfectscale perfectscale v0.0.38

pulumi pulumi-kubernetes-operator 0.3.0

solo.io solo-istiod 1.18.3-eks-a

sysdig sysdig-agent 1.6.3

tetrate.io tetrate-istio-distribution 1.18.1

hashicorp vault 0.25.0

AWS Snow provider validated partners

Kubernetes Version : 1.28

Date of Conformance Test : 2023-11-10

Following ISV Partners have Validated their Conformance :

VENDOR_PRODUCT VENDOR_PRODUCT_TYPE

dynatrace dynatrace

solo.io solo-istiod

komodor k8s-watcher

kong kong-enterprise

accuknox kubearmor

kubecost cost-analyzer

nirmata enterprise-kyverno

lacework polygraph

suse neuvector

newrelic newrelic-bundle

perfectscale perfectscale

pulumi pulumi-kubernetes-operator

sysdig sysdig-agent

hashicorp vault

2 - What's New

New EKS Anywhere releases, features, and fixes

2.1 - Changelog

Changelog for EKS Anywhere releases

Announcements

- EKS Anywhere release

v0.19.0 introduces support for creating Kubernetes version v1.29 clusters. A conformance test was promoted in Kubernetes v1.29 that verifies that Services serving different L4 protocols with the same port number can co-exist in a Kubernetes cluster. This is not supported in Cilium, the CNI deployed on EKS Anywhere clusters, because Cilium currently does not differentiate between TCP and UDP protocols for Kubernetes Services. Hence EKS Anywhere v1.29 clusters will not pass this specific conformance test. This service protocol differentiation is being tracked in an upstream Cilium issue and will be supported in a future Cilium release. A future release of EKS Anywhere will include the patched Cilium version when it is available.

Refer to the following links for more information regarding the conformance test:

- The Bottlerocket project will not be releasing bare metal variants for Kubernetes versions v1.29 and beyond. Hence Bottlerocket is not a supported operating system for creating EKS Anywhere bare metal clusters with Kubernetes versions v1.29 and above. However, Bottlerocket is still supported for bare metal clusters running Kubernetes versions v1.28 and below.

Refer to the following links for more information regarding the deprecation:

- On January 31, 2024, a High-severity vulnerability CVE-2024-21626 was published affecting all

runc versions <= v1.1.11. This CVE has been fixed in runc version v1.1.12, which has been included in EKS Anywhere release v0.18.6. In order to fix this CVE in your new/existing EKS-A cluster, you MUST build or download new OS images pertaining to version v0.18.6 and create/upgrade your cluster with these images.

Refer to the following links for more information on the steps to mitigate the CVE.

- EKS Anywhere version

v0.19.4 introduced a regression in the Curated Packages workflow due to a bug in the associated Packages controller version (v0.4.2). This will be fixed in the next patch release.

- On October 11, 2024, a security issue CVE-2024-9594 was discovered in the Kubernetes Image Builder where default credentials are enabled during the image build process when using the Nutanix, OVA, QEMU or raw providers. The credentials can be used to gain root access. The credentials are disabled at the conclusion of the image build process. Kubernetes clusters are only affected if their nodes use VM images created via the Image Builder project. Clusters using virtual machine images built with Kubernetes Image Builder

version v0.1.37 or earlier are affected if built with the Nutanix, OVA, QEMU or raw providers. These images built using previous versions of image-builder will be vulnerable only during the image build process, if an attacker was able to reach the VM where the image build was happening, login using these default credentials and modify the image at the time the image build was occurring. This CVE has been fixed in image-builder versions >= v0.1.38, which has been included in EKS Anywhere release v0.19.11.

General Information

- When upgrading to a new minor version, a new OS image must be created using the new image-builder CLI pertaining to that release.

Supported OS version details

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu 20.04 |

✔ |

✔ |

✔ |

— |

✔ |

| Ubuntu 22.04 |

✔ |

✔ |

✔ |

— |

— |

| Bottlerocket 1.20.5 |

✔ |

* |

— |

— |

— |

| RHEL 8.x |

✔ |

✔ |

✔ |

✔ |

— |

| RHEL 9.x |

— |

— |

✔ |

✔ |

— |

* EKS Anywhere issue regarding deprecation of Bottlerocket bare metal variants

Upgraded

- EKS Distro:

- Image-builder:

v0.1.36 to v0.1.39 (CVE-2024-9594

)

- containerd:

v1.7.22 to v1.7.23

- Cilium:

v1.13.19 to v1.13.20

- etcdadm-controller:

v1.0.23 to v1.0.24

- etcdadm-bootstrap-provider:

v1.0.13 to v1.0.14

- local-path-provisioner:

v0.0.29 to v0.0.30

- runc:

v1.1.14 to v1.1.15

Fixed

- Skip hardware validation logic for InPlace upgrades. #8779

- Status reconciliation of etcdadm cluster in etcdadm-controller when etcd-machines are unhealthy. #63

- Skip generating AWS IAM Kubeconfig on cluster upgrade. #8851

Supported OS version details

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu 20.04 |

✔ |

✔ |

✔ |

— |

✔ |

| Ubuntu 22.04 |

✔ |

✔ |

✔ |

— |

— |

| Bottlerocket 1.22.0 |

✔ |

* |

— |

— |

— |

| RHEL 8.x |

✔ |

✔ |

✔ |

✔ |

— |

| RHEL 9.x |

— |

— |

✔ |

✔ |

— |

* EKS Anywhere issue regarding deprecation of Bottlerocket bare metal variants

Upgraded

- EKS Distro:

- EKS Anywhere Packages:

v0.4.3 to v0.4.4

- Cilium:

v1.13.18 to v1.13.19

- containerd:

v1.7.20 to v1.7.22

- runc:

v1.1.13 to v1.1.14

- local-path-provisioner:

v0.0.28 to v0.0.29

- etcdadm-controller:

v1.0.22 to v1.0.23

- New base images with CVE fixes for Amazon Linux 2

Supported OS version details

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu 20.04 |

✔ |

✔ |

✔ |

— |

✔ |

| Ubuntu 22.04 |

✔ |

✔ |

✔ |

— |

— |

| Bottlerocket 1.19.2 |

✔ |

* |

— |

— |

— |

| RHEL 8.x |

✔ |

✔ |

✔ |

✔ |

— |

| RHEL 9.x |

— |

— |

✔ |

✔ |

— |

* EKS Anywhere issue regarding deprecation of Bottlerocket bare metal variants

Upgraded

Supported OS version details

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu 20.04 |

✔ |

✔ |

✔ |

— |

✔ |

| Ubuntu 22.04 |

✔ |

✔ |

✔ |

— |

— |

| Bottlerocket 1.19.2 |

✔ |

* |

— |

— |

— |

| RHEL 8.x |

✔ |

✔ |

✔ |

✔ |

— |

| RHEL 9.x |

— |

— |

✔ |

✔ |

— |

* EKS Anywhere issue regarding deprecation of Bottlerocket bare metal variants

Upgraded

Changed

- Added additional validation before marking controlPlane and workers ready #8455

Fixed

- Fix panic when datacenter obj is not found #8494

Supported OS version details

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu 20.04 |

✔ |

✔ |

✔ |

— |

✔ |

| Ubuntu 22.04 |

✔ |

✔ |

✔ |

— |

— |

| Bottlerocket 1.19.2 |

✔ |

* |

— |

— |

— |

| RHEL 8.x |

✔ |

✔ |

✔ |

✔ |

— |

| RHEL 9.x |

— |

— |

✔ |

✔ |

— |

* EKS Anywhere issue regarding deprecation of Bottlerocket bare metal variants

Upgraded

- Cluster API Provider Nutanix:

v1.3.3 to v1.3.5

- Image Builder:

v0.1.24 to v0.1.26

- EKS Distro:

Changed

- Updated cluster status reconciliation logic for worker node groups with autoscaling

configuration #8254

- Added logic to apply new hardware on baremetal cluster upgrades #8288

Fixed

- Fixed bug when installer does not create CCM secret for Nutanix workload cluster #8191

- Fixed upgrade workflow for registry mirror certificates in EKS Anywhere packages #7114

Supported OS version details

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu 20.04 |

✔ |

✔ |

✔ |

— |

✔ |

| Ubuntu 22.04 |

✔ |

✔ |

✔ |

— |

— |

| Bottlerocket 1.19.2 |

✔ |

* |

— |

— |

— |

| RHEL 8.x |

✔ |

✔ |

✔ |

✔ |

— |

| RHEL 9.x |

— |

— |

✔ |

✔ |

— |

* EKS Anywhere issue regarding deprecation of Bottlerocket bare metal variants

Changed

- Backporting dependency bumps to fix vulnerabilities #8118

- Upgraded EKS-D:

Fixed

- Fixed cluster directory being created with root ownership #8120

Supported OS version details

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu 20.04 |

✔ |

✔ |

✔ |

— |

✔ |

| Ubuntu 22.04 |

✔ |

✔ |

✔ |

— |

— |

| Bottlerocket 1.19.2 |

✔ |

* |

— |

— |

— |

| RHEL 8.x |

✔ |

✔ |

✔ |

✔ |

— |

| RHEL 9.x |

— |

— |

✔ |

✔ |

— |

* EKS Anywhere issue regarding deprecation of Bottlerocket bare metal variants

Changed

- Upgraded EKS-Anywhere Packages from

v0.4.2 to v0.4.3

Fixed

- Fixed registry mirror with authentication for EKS Anywhere packages

Supported OS version details

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu 20.04 |

✔ |

✔ |

✔ |

— |

✔ |

| Ubuntu 22.04 |

✔ |

✔ |

✔ |

— |

— |

| Bottlerocket 1.19.2 |

✔ |

* |

— |

— |

— |

| RHEL 8.x |

✔ |

✔ |

✔ |

✔ |

— |

| RHEL 9.x |

— |

— |

✔ |

✔ |

— |

* EKS Anywhere issue regarding deprecation of Bottlerocket bare metal variants

Changed

- Support Docs site for penultime EKS-A version #8010

- Update Ubuntu 22.04 ISO URLs to latest stable release #3114

- Upgraded EKS-D:

Fixed

- Added processor for Tinkerbell Template Config #7816

- Added nil check for eksa-version when setting etcd url #8018

- Fixed registry mirror secret credentials set to empty #7933

Supported OS version details

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu 20.04 |

✔ |

✔ |

✔ |

— |

✔ |

| Ubuntu 22.04 |

✔ |

✔ |

✔ |

— |

— |

| Bottlerocket 1.19.2 |

✔ |

* |

— |

— |

— |

| RHEL 8.x |

✔ |

✔ |

✔ |

✔ |

— |

| RHEL 9.x |

— |

— |

✔ |

✔ |

— |

* EKS Anywhere issue regarding deprecation of Bottlerocket bare metal variants

Changed

- Updated helm to v3.14.3 #3050

Fixed

Supported OS version details

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu 20.04 |

✔ |

✔ |

✔ |

— |

✔ |

| Ubuntu 22.04 |

✔ |

✔ |

✔ |

— |

— |

| Bottlerocket 1.19.2 |

✔ |

* |

— |

— |

— |

| RHEL 8.x |

✔ |

✔ |

✔ |

✔ |

— |

| RHEL 9.x |

— |

— |

✔ |

✔ |

— |

* EKS Anywhere issue regarding deprecation of Bottlerocket bare metal variants

Changed

- Update CAPC to 0.4.10-rc1 #3105

- Upgraded EKS-D:

Fixed

- Fixing tinkerbell action image URIs while using registry mirror with proxy cache.

Supported OS version details

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu 20.04 |

✔ |

✔ |

✔ |

— |

✔ |

| Ubuntu 22.04 |

✔ |

✔ |

✔ |

— |

— |

| Bottlerocket 1.19.2 |

✔ |

* |

— |

— |

— |

| RHEL 8.x |

✔ |

✔ |

✔ |

✔ |

— |

| RHEL 9.x |

— |

— |

✔ |

✔ |

— |

* EKS Anywhere issue regarding deprecation of Bottlerocket bare metal variants

Changed

Added

- Preflight check for upgrade management components such that it ensures management components is at most 1 EKS Anywhere minor version greater than the EKS Anywhere version of cluster components #7800

.

Fixed

- EKS Anywhere package bundles

ending with 152, 153, 154, 157 have image tag issues which have been resolved in bundle 158. Example for kubernetes version v1.29 we have

public.ecr.aws/eks-anywhere/eks-anywhere-packages-bundles:v1-29-158

- Fixed InPlace custom resources from being created again after a successful node upgrade due to delay in objects in client cache #7779

.

- Fixed #7623

by encoding the basic auth credentials to base64 when using them in templates #7829

.

- Added a fix

for error that may occur during upgrading management components where if the cluster object is modified by another process before applying, it throws the conflict error prompting a retry.

Supported OS version details

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu 20.04 |

✔ |

✔ |

✔ |

— |

✔ |

| Ubuntu 22.04 |

✔ |

✔ |

✔ |

— |

— |

| Bottlerocket 1.19.0 |

✔ |

* |

— |

— |

— |

| RHEL 8.x |

✔ |

✔ |

✔ |

✔ |

— |

| RHEL 9.x |

— |

— |

✔ |

✔ |

— |

* EKS Anywhere issue regarding deprecation of Bottlerocket bare metal variants

Added

- Support for Kubernetes v1.29

- Support for in-place EKS Anywhere and Kubernetes version upgrades on Bare Metal clusters

- Support for horizontally scaling

etcd count in clusters with external etcd deployments (#7127

)

- External

etcd support for Nutanix (#7550

)

- Etcd encryption for Nutanix (#7565

)

- Nutanix Cloud Controller Manager integration (#7534

)

- Enable image signing for all images used in cluster operations

- RedHat 9 support for CloudStack (#2842

)

- New

upgrade management-components command which upgrades management components independently of cluster components (#7238

)

- New

upgrade plan management-components command which provides new release versions for the next management components upgrade (#7447

)

- Make

maxUnhealthy count configurable for control plane and worker machines (#7281

)

Changed

- Unification of controller and CLI workflows for cluster lifecycle operations such as create, upgrade, and delete

- Perform CAPI Backup on workload cluster during upgrade(#7364

)

- Extend

maxSurge and maxUnavailable configuration support to all providers

- Upgraded Cilium to v1.13.19

- Upgraded EKS-D:

- Cluster API Provider AWS Snow:

v0.1.26 to v0.1.27

- Cluster API:

v1.5.2 to v1.6.1

- Cluster API Provider vSphere:

v1.7.4 to v1.8.5

- Cluster API Provider Nutanix:

v1.2.3 to v1.3.1

- Flux:

v2.0.0 to v2.2.3

- Kube-vip:

v0.6.0 to v0.7.0

- Image-builder:

v0.1.19 to v0.1.24

- Kind:

v0.20.0 to v0.22.0

Removed

Fixed

- Validate OCI namespaces for registry mirror on Bottlerocket (#7257

)

- Make Cilium reconciler use provider namespace when generating network policy (#7705

)

- EKS Anywhere v0.18.7 Admin AMI with CVE fixes for Amazon Linux 2

Supported Operating Systems

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu 20.04 |

✔ |

✔ |

✔ |

— |

✔ |

| Ubuntu 22.04 |

✔ |

✔ |

✔ |

— |

— |

| Bottlerocket 1.19.0 |

✔ |

✔ |

— |

— |

— |

| RHEL 8.7 |

✔ |

✔ |

✔ |

✔ |

— |

| RHEL 9.x |

— |

— |

✔ |

— |

— |

- EKS Anywhere v0.18.6 Admin AMI with CVE fixes for

runc

- New base images with CVE fixes for Amazon Linux 2

- Bottlerocket

v1.15.1 to 1.19.0

- runc

v1.1.10 to v1.1.12 (CVE-2024-21626

)

- containerd

v1.7.11 to v.1.7.12

Supported Operating Systems

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu 20.04 |

✔ |

✔ |

✔ |

— |

✔ |

| Ubuntu 22.04 |

✔ |

✔ |

✔ |

— |

— |

| Bottlerocket 1.19.0 |

✔ |

✔ |

— |

— |

— |

| RHEL 8.x |

✔ |

✔ |

✔ |

✔ |

— |

| RHEL 9.x |

— |

— |

✔ |

— |

— |

- New EKS Anywhere Admin AMI with CVE fixes for Amazon Linux 2

- New base images with CVE fixes for Amazon Linux 2

Supported Operating Systems

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu 20.04 |

✔ |

✔ |

✔ |

— |

✔ |

| Ubuntu 22.04 |

✔ |

✔ |

✔ |

— |

— |

| Bottlerocket 1.15.1 |

✔ |

✔ |

— |

— |

— |

| RHEL 8.7 |

✔ |

✔ |

✔ |

✔ |

— |

| RHEL 9.x |

— |

— |

✔ |

— |

— |

Feature

- Nutanix: Enable api-server audit logging for Nutanix (#2664

)

Bug

- CNI reconciler now properly pulls images from registry mirror instead of public ECR in airgapped environments: #7170

Supported Operating Systems

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu 20.04 |

✔ |

✔ |

✔ |

— |

✔ |

| Ubuntu 22.04 |

✔ |

✔ |

✔ |

— |

— |

| Bottlerocket 1.15.1 |

✔ |

✔ |

— |

— |

— |

| RHEL 8.7 |

✔ |

✔ |

✔ |

✔ |

— |

| RHEL 9.x |

— |

— |

✔ |

— |

— |

Fixed

- Etcdadm: Renew client certificates when nodes rollover (etcdadm/#56

)

- Include DefaultCNIConfigured condition in Cluster Ready status except when Skip Upgrades is enabled (#7132

)

- EKS Distro (Kubernetes):

v1.25.15 to v1.25.16v1.26.10 to v1.26.11v1.27.7 to v1.27.8v1.28.3 to v1.28.4

- Etcdadm Controller:

v1.0.15 to v1.0.16

Supported Operating Systems

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu 20.04 |

✔ |

✔ |

✔ |

— |

✔ |

| Ubuntu 22.04 |

✔ |

✔ |

✔ |

— |

— |

| Bottlerocket 1.15.1 |

✔ |

✔ |

— |

— |

— |

| RHEL 8.7 |

✔ |

✔ |

✔ |

✔ |

— |

| RHEL 9.x |

— |

— |

✔ |

— |

— |

Fixed

- Image Builder: Correctly parse

no_proxy inputs when both Red Hat Satellite and Proxy is used in image-builder. (#2664

)

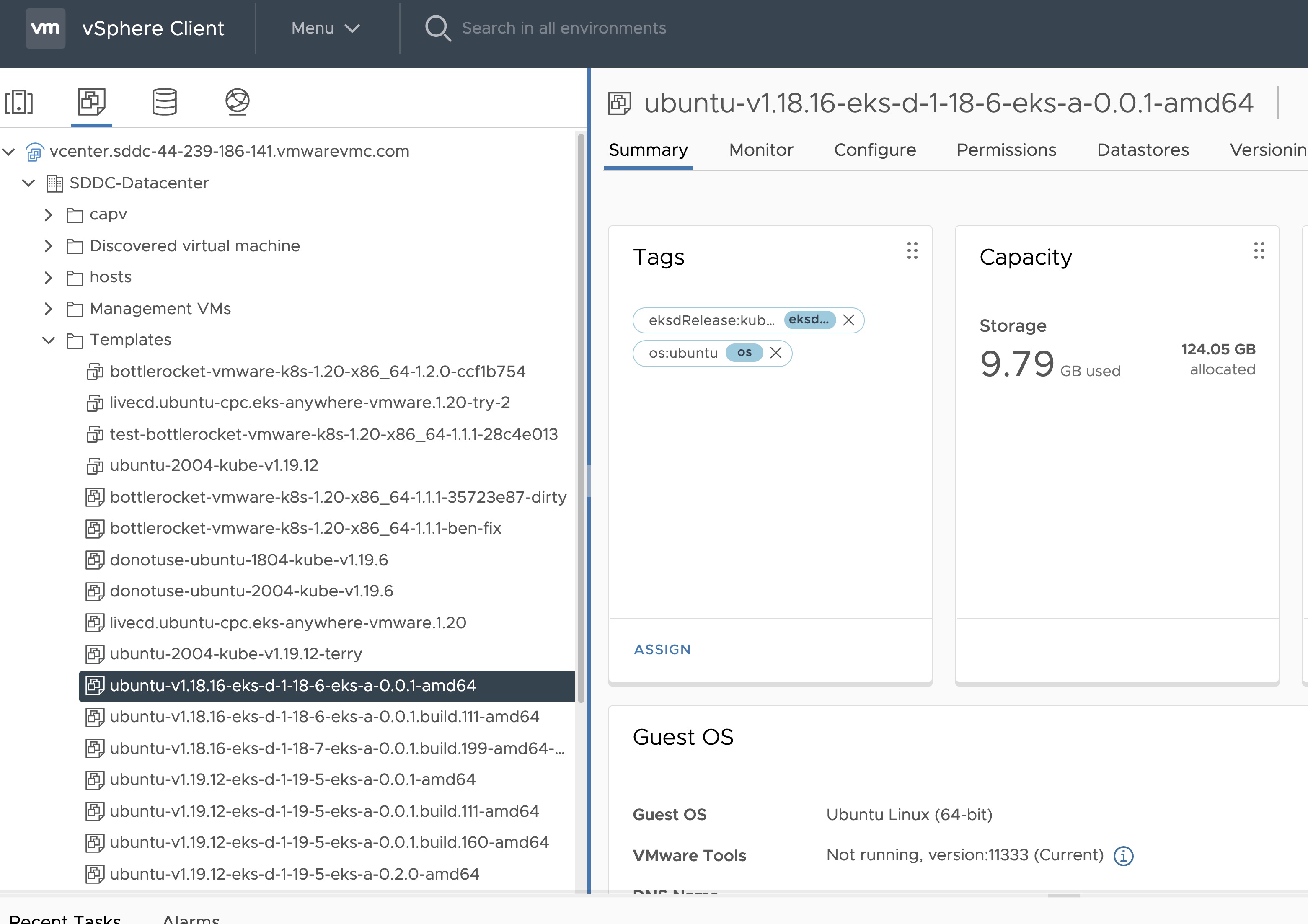

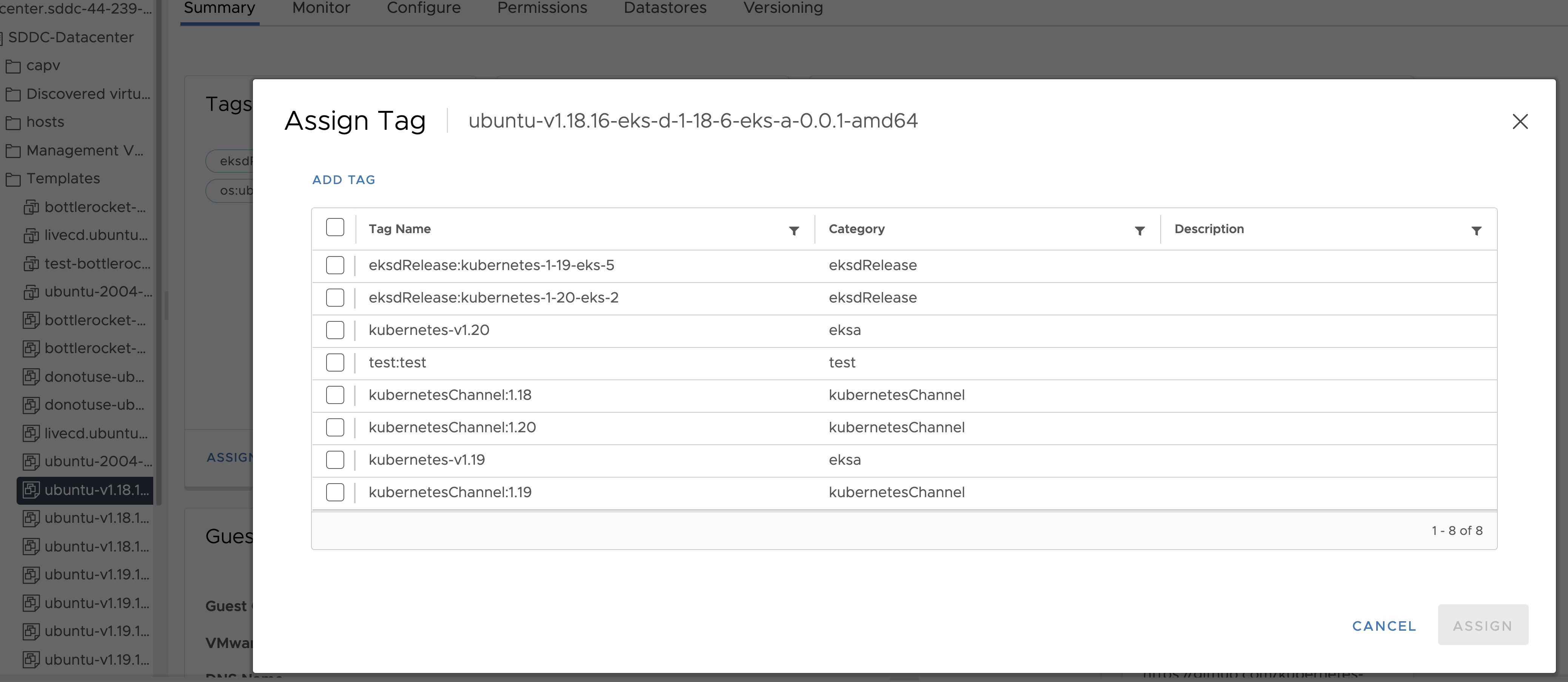

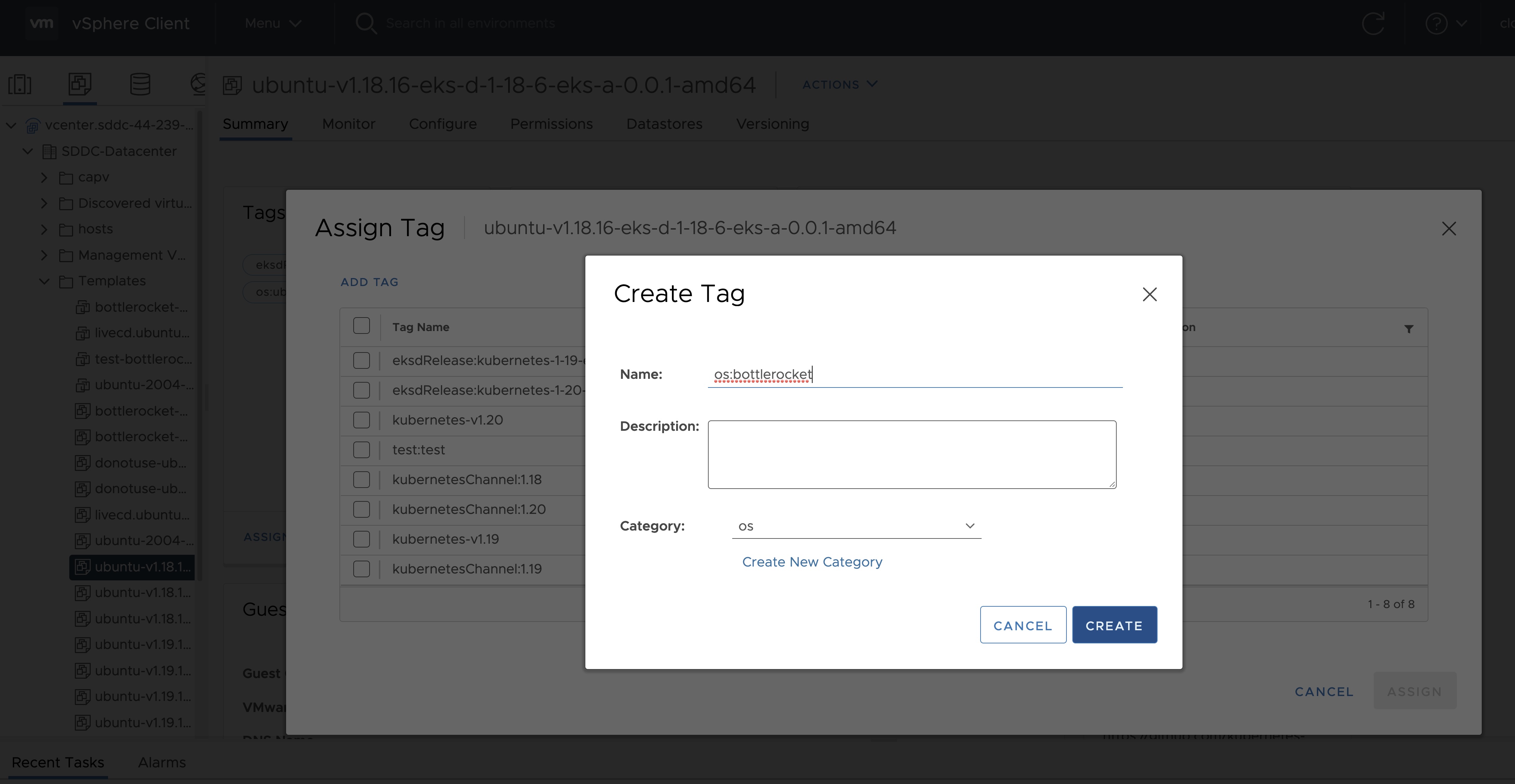

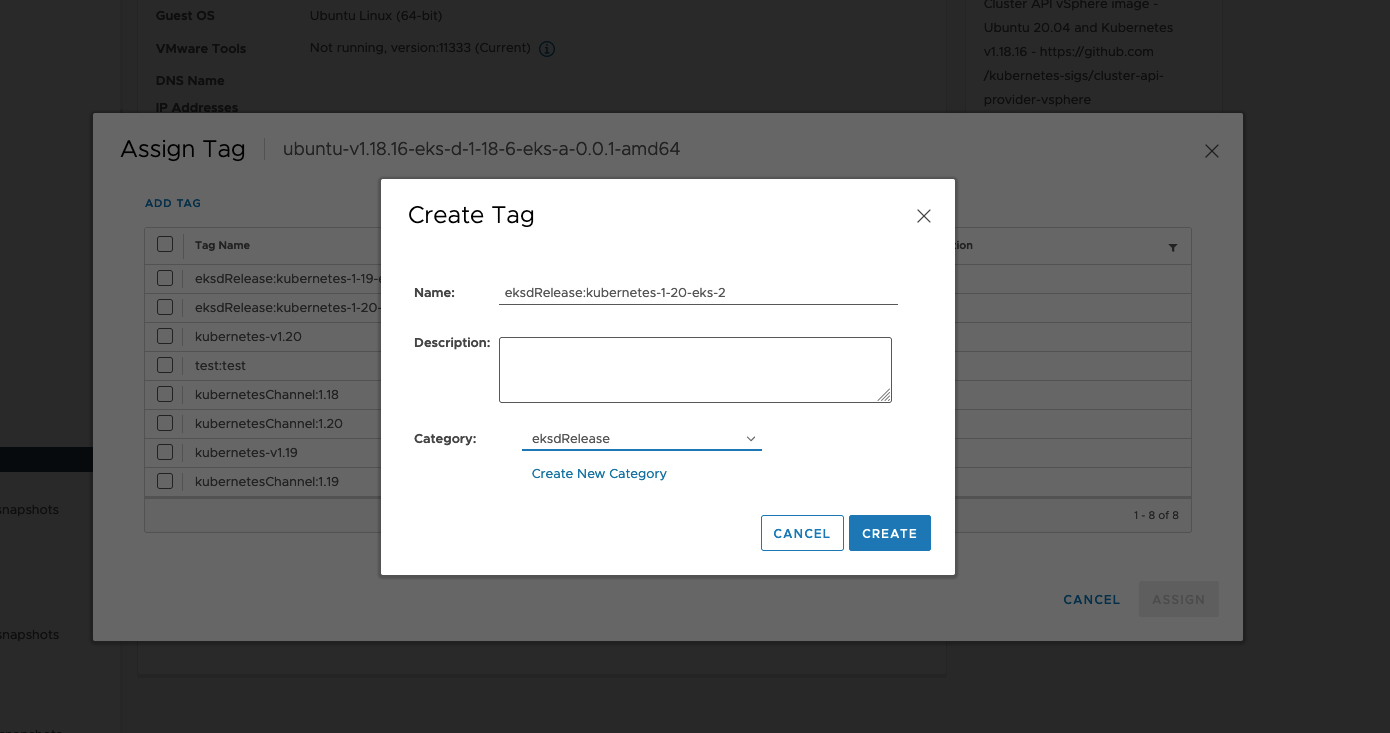

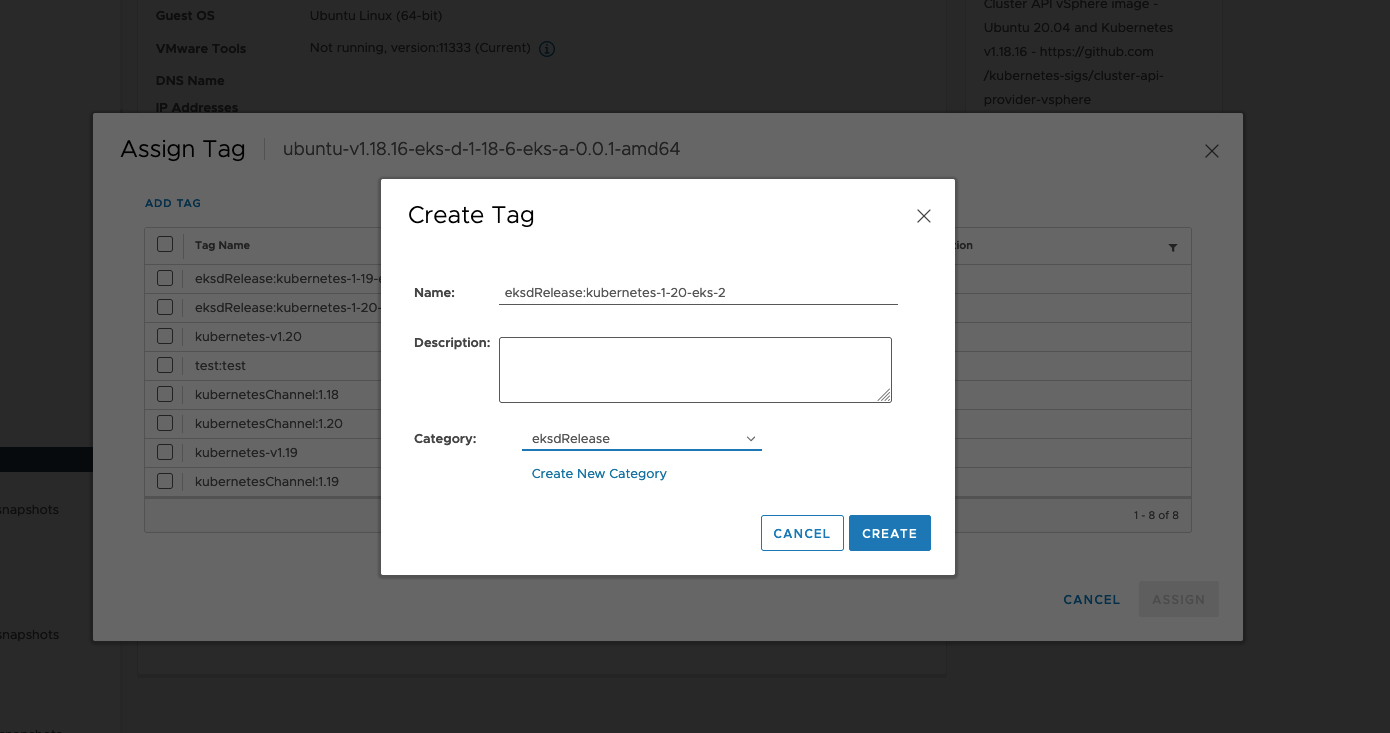

- vSphere: Fix template tag validation by specifying the full template path (#6437

)

- Bare Metal: Skip

kube-vip deployment when TinkerbellDatacenterConfig.skipLoadBalancerDeployment is set to true. (#6990

)

Other

- Security: Patch incorrect conversion between uint64 and int64 (#7048

)

- Security: Fix incorrect regex for matching curated package registry URL (#7049

)

- Security: Patch malicious tarballs directory traversal vulnerability (#7057

)

Supported Operating Systems

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu 20.04 |

✔ |

✔ |

✔ |

— |

✔ |

| Ubuntu 22.04 |

✔ |

✔ |

✔ |

— |

— |

| Bottlerocket 1.15.1 |

✔ |

✔ |

— |

— |

— |

| RHEL 8.7 |

✔ |

✔ |

✔ |

✔ |

— |

| RHEL 9.x |

— |

— |

✔ |

— |

— |

- EKS Distro (Kubernetes):

v1.25.14 to v1.25.15v1.26.9 to v1.26.10v1.27.6 to v1.27.7v1.28.2 to v1.28.3

- Etcdadm Bootstrap Provider:

v1.0.9 to v1.0.10

- Etcdadm Controller:

v1.0.14 to v1.0.15

- Cluster API Provider CloudStack:

v0.4.9-rc7 to v0.4.9-rc8

- EKS Anywhere Packages Controller :

v0.3.12 to v0.3.13

Bug

- Bare Metal: Ensure the Tinkerbell stack continues to run on management clusters when worker nodes are scaled to 0 (#2624

)

Supported Operating Systems

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu 20.04 |

✔ |

✔ |

✔ |

— |

✔ |

| Ubuntu 22.04 |

✔ |

✔ |

✔ |

— |

— |

| Bottlerocket 1.15.1 |

✔ |

✔ |

— |

— |

— |

| RHEL 8.7 |

✔ |

✔ |

✔ |

✔ |

— |

| RHEL 9.x |

— |

— |

✔ |

— |

— |

Supported OS version details

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu |

20.04 |

20.04 |

20.04 |

Not supported |

20.04 |

|

22.04 |

22.04 |

22.04 |

Not supported |

Not supported |

| Bottlerocket |

1.15.1 |

1.15.1 |

Not supported |

Not supported |

Not supported |

| RHEL |

8.7 |

8.7 |

9.x, 8.7 |

8.7 |

Not supported |

Added

- Etcd encryption for CloudStack and vSphere: #6557

- Generate TinkerbellTemplateConfig command: #3588

- Support for modular Kubernetes version upgrades with bare metal: #6735

- OSImageURL added to Tinkerbell Machine Config

- Bare metal out-of-band webhook: #5738

- Support for Kubernetes v1.28

- Support for air gapped image building: #6457

- Support for RHEL 8 and RHEL 9 for Nutanix provider: #6822

- Support proxy configuration on Redhat image building #2466

- Support Redhat Satellite in image building #2467

Changed

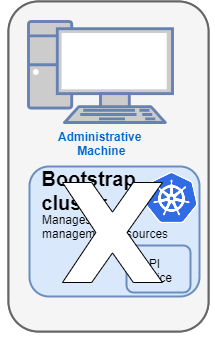

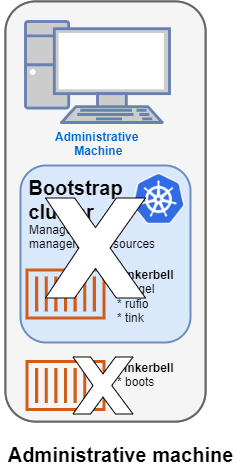

- KinD-less upgrades: #6622

- Management cluster upgrades don’t require a local bootstrap cluster anymore.

- The control plane of management clusters can be upgraded through the API. Previously only changes to the worker nodes were allowed.

- Increased control over upgrades by separating external etcd reconciliation from control plane nodes: #6496

- Upgraded Cilium to 1.12.15

- Upgraded EKS-D:

- Cluster API Provider CloudStack:

v0.4.9-rc6 to v0.4.9-rc7

- Cluster API Provider AWS Snow:

v0.1.26 to v0.1.27

- Upgraded CAPI to

v1.5.2

Removed

- Support for Kubernetes v1.23

Fixed

- Fail on

eksctl anywhere upgrade cluster plan -f: #6716

- Error out when management kubeconfig is not present for workload cluster operations: 6501

- Empty vSphereMachineConfig users fails CLI upgrade: 5420

- CLI stalls on upgrade with Flux Gitops: 6453

Bug

- CNI reconciler now properly pulls images from registry mirror instead of public ECR in airgapped environments: #7170

- Waiting for control plane to be fully upgraded: #6764

Other

- Check for k8s version in the Cloudstack template name: #7130

Supported Operating Systems

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu 20.04 |

✔ |

✔ |

✔ |

— |

✔ |

| Ubuntu 22.04 |

✔ |

✔ |

✔ |

— |

— |

| Bottlerocket 1.14.3 |

✔ |

✔ |

— |

— |

— |

| RHEL 8.7 |

✔ |

✔ |

_ |

✔ |

— |

- Cluster API Provider CloudStack:

v0.4.9-rc7 to v0.4.9-rc8

Supported Operating Systems

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu 20.04 |

✔ |

✔ |

✔ |

— |

✔ |

| Ubuntu 22.04 |

✔ |

✔ |

✔ |

— |

— |

| Bottlerocket 1.14.3 |

✔ |

✔ |

— |

— |

— |

| RHEL 8.7 |

✔ |

✔ |

_ |

✔ |

— |

Supported OS version details

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu |

20.04 |

20.04 |

20.04 |

Not supported |

20.04 |

|

22.04 |

22.04 |

22.04 |

Not supported |

Not supported |

| Bottlerocket |

1.14.3 |

1.14.3 |

Not supported |

Not supported |

Not supported |

| RHEL |

8.7 |

8.7 |

Not supported |

8.7 |

Not supported |

Added

- Enabled audit logging for

kube-apiserver on baremetal provider (#6779

).

Supported OS version details

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu |

20.04 |

20.04 |

20.04 |

Not supported |

20.04 |

|

22.04 |

22.04 |

22.04 |

Not supported |

Not supported |

| Bottlerocket |

1.14.3 |

1.14.3 |

Not supported |

Not supported |

Not supported |

| RHEL |

8.7 |

8.7 |

Not supported |

8.7 |

Not supported |

Fixed

- Fixed cli upgrade mgmt kubeconfig flag (#6666

)

- Ignore node taints when scheduling Cilium preflight daemonset (#6697

)

- Baremetal: Prevent bare metal machine config references from changing to existing machine configs (#6674

)

Supported OS version details

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu |

20.04 |

20.04 |

20.04 |

Not supported |

20.04 |

|

22.04 |

22.04 |

22.04 |

Not supported |

Not supported |

| Bottlerocket |

1.14.0 |

1.14.0 |

Not supported |

Not supported |

Not supported |

| RHEL |

8.7 |

8.7 |

Not supported |

8.7 |

Not supported |

Fixed

- Bare Metal: Ensure new worker node groups can reference new machine configs (#6615

)

- Bare Metal: Fix

writefile action to ensure Bottlerocket configs write content or error (#2441

)

Added

- Added support for configuring healthchecks on EtcdadmClusters using

etcdcluster.cluster.x-k8s.io/healthcheck-retries annotation (aws/etcdadm-controller#44

)

- Add check for making sure quorum is maintained before deleting etcd machines (aws/etcdadm-controller#46

)

Changed

Supported OS version details

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu |

20.04 |

20.04 |

20.04 |

Not supported |

20.04 |

|

22.04 |

22.04 |

22.04 |

Not supported |

Not supported |

| Bottlerocket |

1.14.0 |

1.14.0 |

Not supported |

Not supported |

Not supported |

| RHEL |

8.7 |

8.7 |

Not supported |

8.7 |

Not supported |

Fixed

- Fix worker node groups being rolled when labels adjusted #6330

- Fix worker node groups being rolled out when taints are changed #6482

- Fix vSphere template tags validation to run on the control plane and etcd

VSpherMachinesConfig #6591

- Fix Bare Metal upgrade with custom pod CIDR #6442

Added

- Add validation for missing management cluster kubeconfig during workload cluster operations #6501

Supported OS version details

|

vSphere |

Bare Metal |

Nutanix |

CloudStack |

Snow |

| Ubuntu |

20.04 |

20.04 |

20.04 |

Not supported |

20.04 |

|

22.04 |

22.04 |

22.04 |

Not supported |

Not supported |

| Bottlerocket |

1.14.0 |

1.14.0 |

Not supported |

Not supported |

Not supported |

| RHEL |

8.7 |

8.7 |

Not supported |

8.7 |

Not supported |

Note: We have updated the image-builder docs to include the latest enhancements. Please refer to the image-builder docs

for more details.

Added

- Add support for AWS CodeCommit repositories in FluxConfig with git configuration #4290

- Add new information to the EKS Anywhere Cluster status #5628

:

- Add the

ControlPlaneInitialized, ControlPlaneReady, DefaultCNIConfigured, WorkersReady, and Ready conditions.

- Add the

observedGeneration field.

- Add the

failureReason field.

- Add support for different machine templates for control plane, etcd, and worker node in vSphere provider #4255

- Add support for different machine templates for control plane, etcd, and worker node in Cloudstack provider #6291

- Add support for Kubernetes version 1.25, 1.26, and 1.27 to CloudStack provider #6167

- Add bootstrap cluster backup in the event of cluster upgrade error #6086

- Add support for organizing virtual machines into categories with the Nutanix provider #6014

- Add support for configuring

egressMasqueradeInterfaces option in Cilium CNI via EKS Anywhere cluster spec #6018

- Add support for a flag for create and upgrade cluster to skip the validation

--skip-validations=vsphere-user-privilege

- Add support for upgrading control plane nodes separately from worker nodes for vSphere, Nutanix, Snow, and Cloudstack providers #6180

- Add preflight validation to prevent skip eks-a minor version upgrades #5688

- Add preflight check to block using kindnetd CNI in all providers except Docker [#6097]https://github.com/aws/eks-anywhere/issues/6097

- Added feature to configure machine health checks for API managed clusters and a new way to configure health check timeouts via the EKKSA spec. [#6176]https://github.com/aws/eks-anywhere/pull/6176

Upgraded

- Cluster API Provider vSphere:

v1.6.1 to v1.7.0

- Cluster API Provider Cloudstack:

v0.4.9-rc5 to v0.4.9-rc6

- Cluster API Provider Nutanix:

v1.2.1 to v1.2.3

Cilium Upgrades

-

Cilium: v1.11.15 to v1.12.11

Note: If you are using the vSphere provider with the Redhat OS family, there is a known issue with VMWare and the new Cilium version that only affects our Redhat variants. To prevent this from affecting your upgrade from EKS Anywhere v0.16 to v0.17, we are adding a temporary daemonset to disable UDP offloading on the nodes before upgrading Cilium. After your cluster is upgraded, the daemonset will be deleted. This note is strictly informational as this change requires no additional effort from the user.

Changed

- Change the default node startup timeout from 10m to 20m in Bare Metal provider #5942

- EKS Anywhere now fails on pre-flights if a user does not have required permissions. #5865

eksaVersion field in the cluster spec is added for better representing CLI version and dependencies in EKS-A cluster #5847

- vSphere datacenter insecure and thumbprint is now mutable for upgrades when using full lifecycle API [6143]https://github.com/aws/eks-anywhere/issues/6143

Fixed

- Fix cluster creation failure when the

<Provider>DatacenterConfig is missing apiVersion field #6096

- Allow registry mirror configurations to be mutable for Bottlerocket OS #2336

- Patch an issue where mutable fields in the EKS Anywhere CloudStack API failed to trigger upgrades #5910

- image builder: Fix runtime issue with git in image-builder v0.16.2 binary #2360

- Bare Metal: Fix issue where metadata requests that return non-200 responses were incorrectly treated as OK #2256

Known Issues:

- Upgrading Docker clusters from previous versions of EKS Anywhere may not work on Linux hosts due to an issue in the Cilium 1.11 to 1.12 upgrade. Docker clusters is meant solely for testing and not recommended or support for production use cases. There is currently no fixed planned.

- If you are installing EKS Anywhere Packages, Kubernetes versions 1.23-1.25 are incompatible with Kubernetes versions 1.26-1.27 due to an API difference. This means that you may not have worker nodes on Kubernetes version <= 1.25 when the control plane nodes are on Kubernetes version >= 1.26. Therefore, if you are upgrading your control plane nodes to 1.26, you must upgrade all nodes to 1.26 to avoid failures.

- There is a known bug

with systemd >= 249 and all versions of Cilium. This is currently known to only affect Ubuntu 22.04. This will be fixed in future versions of EKS Anywhere. To work around this issue, run one of the follow options on all nodes.

Option A

# Does not persist across reboots.

sudo ip rule add from all fwmark 0x200/0xf00 lookup 2004 pref 9

sudo ip rule add from all fwmark 0xa00/0xf00 lookup 2005 pref 10

sudo ip rule add from all lookup local pref 100

Option B

# Does persist across reboots.

# Add these values /etc/systemd/networkd.conf

[Network]

ManageForeignRoutes=no

ManageForeignRoutingPolicyRules=no

Deprecated

- The bundlesRef field in the cluster spec is now deprecated in favor of the new

eksaVersion field. This field will be deprecated in three versions.

Removed

- Installing vSphere CSI Driver as part of vSphere cluster creation. For more information on how to self-install the driver refer to the documentation here

⚠️ Breaking changes

- CLI:

--force-cleanup has been removed from create cluster, upgrade cluster and delete cluster commands. For more information on how to troubleshoot issues with the bootstrap cluster refer to the troubleshooting guide (1

and 2

). #6384

Changed

- Bump up the worker count for etcdadm-controller from 1 to 10 #34

- Add 2X replicas hard limit for rolling out new etcd machines #37

Fixed

- Fix code panic in healthcheck loop in etcdadm-controller #41

- Fix deleting out of date machines in etcdadm-controller #40

Fixed

- Fix support for having management cluster and workload cluster in different namespaces #6414

Changed

- During management cluster upgrade, if the backup of CAPI objects of all workload clusters attached to the management cluster fails before upgrade starts, EKS Anywhere will only backup the management cluster #6360

- Update kubectl wait retry policy to retry on TLS handshake errors #6373

Removed

- Removed the validation for checking management cluster bundle compatibility on create/upgrade workload cluster #6365

Fixes

- CLI: Ensure importing packages and bundles honors the insecure flag #6056

- vSphere: Fix credential configuration when using the full lifecycle controller #6058

- Bare Metal: Fix handling of Hardware validation errors in Tinkerbell full lifecycle cluster provisioning #6091

- Bare Metal: Fix parsing of bare metal cluster configurations containing embedded PEM certs #6095

Upgrades

- AWS Cloud Provider: v1.27.0 to v1.27.1

- EKS Distro:

- Kubernetes v1.24.13 to v1.24.15

- Kubernetes v1.25.9 to v1.25.11

- Kubernetes v1.26.4 to v1.26.6

- Kubernetes v1.27.1 to v1.27.3

- Cluster API Provider Snow: v0.1.25 to v0.1.26

Added

- Workload clusters full lifecycle API support for CloudStack provider (#2754

)

- Enable proxy configuration for Bare Metal provider (#5925

)

- Kubernetes 1.27 support (#5929

)

- Support for upgrades for clusters with pod disruption budgets (#5697

)

- BottleRocket network config uses mac addresses instead of interface names for configuring interfaces for the Bare Metal provider (#3411

)

- Allow users to configure additional BottleRocket settings

- kernel sysctl settings (#5304

)

- boot kernel parameters (#5359

)

- custom trusted cert bundles (#5625

)

- Add support for IRSA on Nutanix (#5698

)

- Add support for aws-iam-authenticator on Nutanix (#5698

)

- Enable proxy configuration for Nutanix (#5779

)

Upgraded

- Management cluster upgrades will only move management cluster’s components to bootstrap cluster and back. (#5914

)

Fixed

- CloudStack control plane host port is only defaulted in CAPI objects if not provided. (#5792

) (#5736

)

Deprecated

- Add warning to deprecate disableCSI through CLI (#5918

). Refer to the deprecation section

in the vSphere provider documentation for more information.

Removed

Fixed

- Add validation for tinkerbell ip for workload cluster to match management cluster (#5798

)

- Update datastore usage validation to account for space that will free up during upgrade (#5524

)

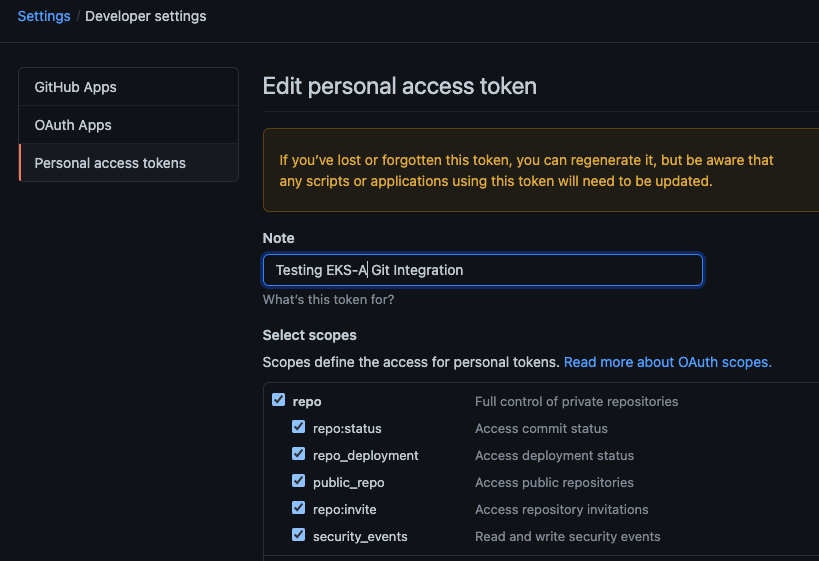

- Expand GITHUB_TOKEN regex to support fine-grained access tokens (#5764

)

- Display the timeout flags in CLI help (#5637

)

Added

- Added bundles-override to package cli commands (#5695

)

Fixed

Supported OS version details

|

vSphere |

Baremetal |

Nutanix |

Cloudstack |

Snow |

| Ubuntu |

20.04 |

20.04 |

20.04 |

Not supported |

20.04 |

| Bottlerocket |

1.13.1 |

1.13.1 |

Not supported |

Not supported |

Not supported |

| RHEL |

8.7 |

8.7 |

Not supported |

8.7 |

Not supported |

Added

- Support for no-timeouts to more EKS Anywhere operations (#5565

)

Changed

- Use kubectl for kube-proxy upgrader calls (#5609

)

Fixed

- Fixed the failure to delete a Tinkerbell workload cluster due to an incorrect SSH key update during reconciliation (#5554

)

- Fixed

machineGroupRef updates for CloudStack and Vsphere (#5313

)

Supported OS version details

|

vSphere |

Baremetal |

Nutanix |

Cloudstack |

Snow |

| Ubuntu |

20.04 |

20.04 |

20.04 |

Not supported |

20.04 |

| Bottlerocket |

1.13.1 |

1.13.1 |

Not supported |

Not supported |

Not supported |

| RHEL |

8.7 |

8.7 |

Not supported |

8.7 |

Not supported |

Added

Upgraded

- Cilium updated from version

v1.11.10 to version v1.11.15

Fixed

- Fix http client in file reader to honor the provided HTTP_PROXY, HTTPS_PROXY and NO_PROXY env variables (#5488

)

Supported OS version details

|

vSphere |

Baremetal |

Nutanix |

Cloudstack |

Snow |

| Ubuntu |

20.04 |

20.04 |

20.04 |

Not supported |

20.04 |

| Bottlerocket |

1.13.1 |

1.13.1 |

Not supported |

Not supported |

Not supported |

| RHEL |

8.7 |

8.7 |

Not supported |

8.7 |

Not supported |

Added

- Workload clusters full lifecycle API support for Bare Metal provider (#5237

)

- IRSA support for Bare Metal (#4361

)

- Support for mixed disks within the same node grouping for BareMetal clusters (#3234

)

- Workload clusters full lifecycle API support for Nutanix provider (#5190

)

- OIDC support for Nutanix (#4711

)

- Registry mirror support for Nutanix (#5236

)

- Support for linking EKS Anywhere node VMs to Nutanix projects (#5266

)

- Add

CredentialsRef to NutanixDatacenterConfig to specify Nutanix credentials for workload clusters (#5114

)

- Support for taints and labels for Nutanix provider (#5172

)

- Support for InsecureSkipVerify for RegistryMirrorConfiguration across all providers. Currently only supported for Ubuntu and RHEL OS. (#1647

)

- Support for configuring of Bottlerocket settings. (#707

)

- Support for using a custom CNI (#5217

)

- Ability to configure NTP servers on EKS Anywhere nodes for vSphere and Tinkerbell providers (#4760

)

- Support for nonRootVolumes option in SnowMachineConfig (#5199

)

- Validate template disk size with vSphere provider using Bottlerocket (#1571

)

- Allow users to specify

cloneMode for different VSphereMachineConfig (#4634

)

- Validate management cluster bundles version is the same or newer than bundle version used to upgrade a workload cluster(#5105

)

- Set hostname for Bottlerocket nodes (#3629

)

- Curated Package controller as a package (#831

)

- Curated Package Credentials Package (#829

)

- Enable Full Cluster Lifecycle for curated packages (#807

)

- Curated Package Controller Configuration in Cluster Spec (#5031

)

Upgraded

- Bottlerocket upgraded from

v1.13.0 to v1.13.1

- Upgrade EKS Anywhere admin AMI to Kernel 5.15

- Tinkerbell stack upgraded (#3233

):

- Cluster API Provider Tinkerbell

v0.4.0

- Hegel

v0.10.1

- Rufio

v0.2.1

- Tink

v0.8.0

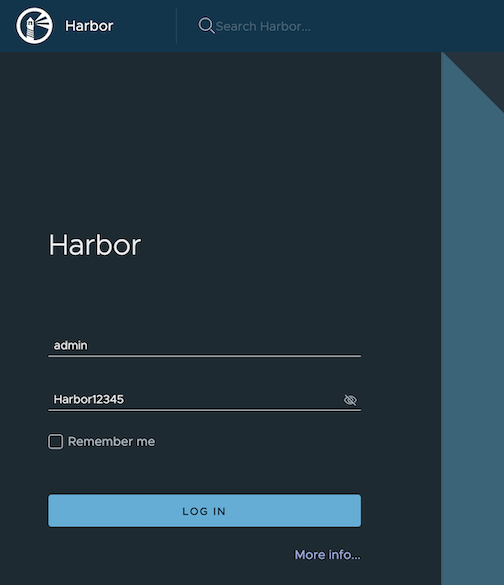

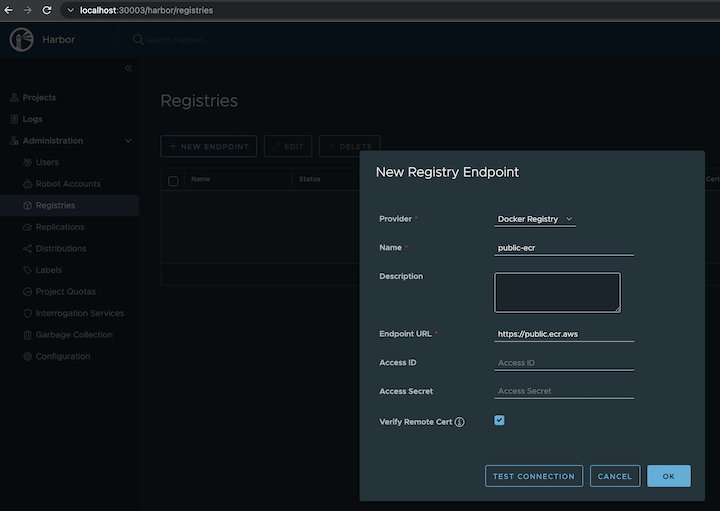

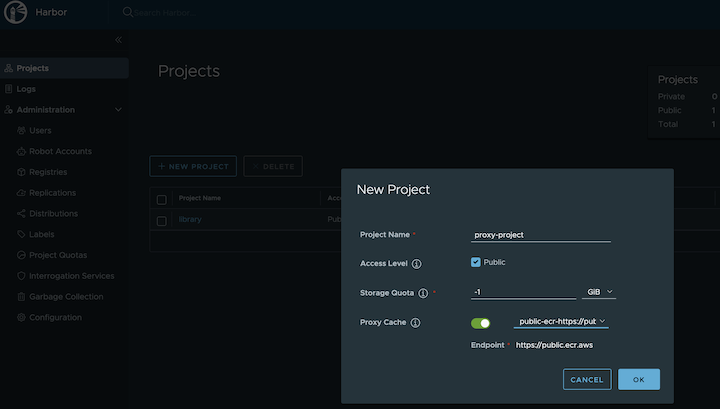

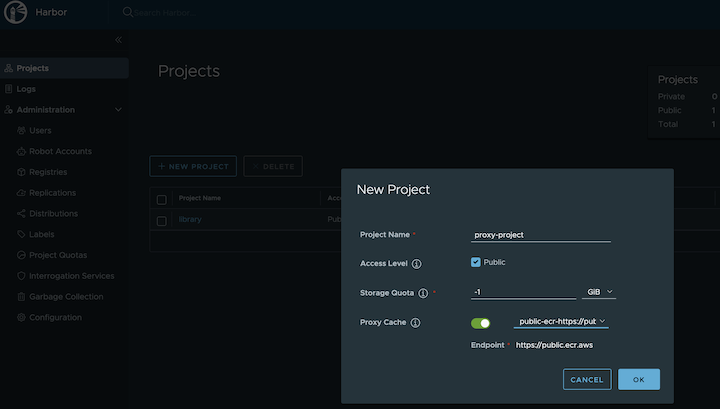

- Curated Package Harbor upgraded from

2.5.1 to 2.7.1

- Curated Package Prometheus upgraded from

2.39.1 to 2.41.0

- Curated Package Metallb upgraded from

0.13.5 to 0.13.7

- Curated Package Emissary upgraded from

3.3.0 to 3.5.1

Fixed

- Applied a patch that fixes vCenter sessions leak (#1767

)

Breaking changes

- Removed support for Kubernetes 1.21

Fixed

- Fix clustermanager no-timeouts option (#5445

)

Fixed

- Fix kubectl get call to point to full API name (#5342

)

- Expand all kubectl calls to fully qualified names (#5347

)

Added

--no-timeouts flag in create and upgrade commands to disable timeout for all wait operations- Management resources backup procedure with clusterctl

Added

--aws-region flag to copy packages command.

Upgraded

- CAPAS from

v0.1.22 to v0.1.24.

Added

- Enabled support for Kubernetes version 1.25

Added

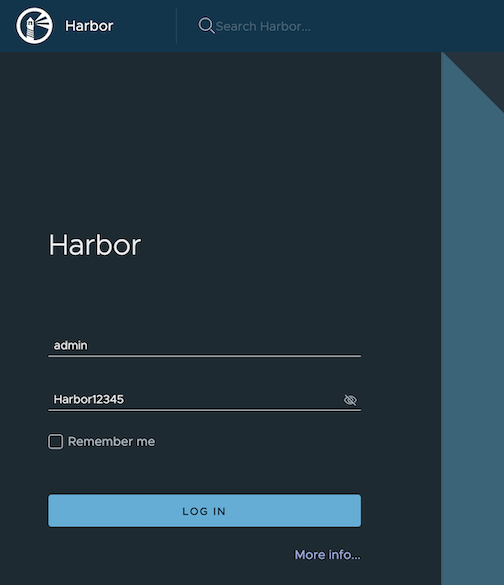

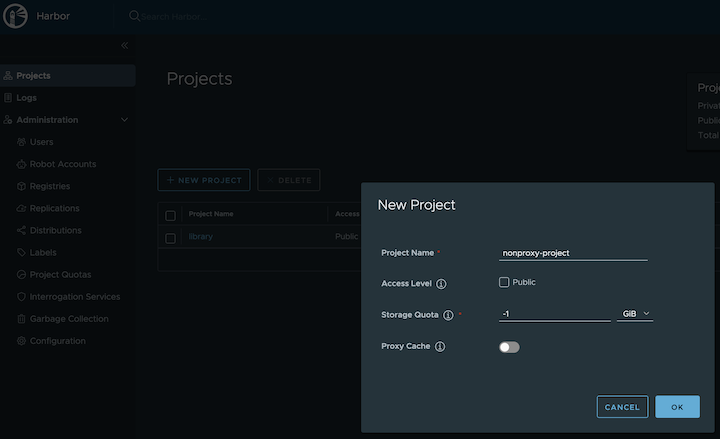

- support for authenticated pulls from registry mirror (#4796

)

- option to override default nodeStartupTimeout in machine health check (#4800

)

- Validate control plane endpoint with pods and services CIDR blocks(#4816

)

Fixed

- Fixed a issue where registry mirror settings weren’t being applied properly on Bottlerocket nodes for Tinkerbell provider

Added

- Add support for EKS Anywhere on AWS Snow (#1042

)

- Static IP support for BottleRocket (#4359

)

- Add registry mirror support for curated packages

- Add copy packages command (#4420

)

Fixed

- Improve management cluster name validation for workload clusters

Added

- Multi-region support for all supported curated packages

Fixed

- Fixed nil pointer in

eksctl anywhere upgrade plan command

Added

- Workload clusters full lifecycle API support for vSphere and Docker (#1090

)

- Single node cluster support for Bare Metal provider

- Cilium updated to version

v1.11.10

- CLI high verbosity log output is automatically included in the support bundle after a CLI

cluster command error (#1703

implemented by #4289

)

- Allow to configure machine health checks timeout through a new flag

--unhealthy-machine-timeout (#3918

implemented by #4123

)

- Ability to configure rolling upgrade for Bare Metal and Cloudstack via

maxSurge and maxUnavailable parameters

- New Nutanix Provider

- Workload clusters support for Bare Metal

- VM Tagging support for vSphere VM’s created in the cluster (#4228

)

- Support for new curated packages:

- Updated curated packages' versions:

- ADOT

v0.23.0 upgraded from v0.21.1

- Emissary

v3.3.0 upgraded from v3.0.0

- Metallb

v0.13.7 upgraded from v0.13.5

- Support for packages controller to create target namespaces #601

- (For more EKS Anywhere packages info: v0.13.0

)

Fixed

- Kubernetes version upgrades from 1.23 to 1.24 for Docker clusters (#4266

)

- Added missing docker login when doing authenticated registry pulls

Breaking changes

- Removed support for Kubernetes 1.20

Added

- Add support for Kubernetes 1.24 (CloudStack support to come in future releases)#3491

Fixed

- Fix authenticated registry mirror validations

- Fix capc bug causing orphaned VM’s in slow environments

- Bundle activation problem for package controller

Changed

- Setting minimum wait time for nodes and machinedeployments (#3868, fixes #3822)

Fixed

- Fixed worker node count pointer dereference issue (#3852)

- Fixed eks-anywhere-packages reference in go.mod (#3902)

- Surface dropped error in Cloudstack validations (#3832)

⚠️ Breaking changes

- Certificates signed with SHA-1 are not supported anymore for Registry Mirror. Users with a registry mirror and providing a custom CA cert will need to rotate the certificate served by the registry mirror endpoint before using the new EKS-A version. This is true for both new clusters (

create cluster command) and existing clusters (upgrade cluster command).

- The

--source option was removed from several package commands. Use either --kube-version for registry or --cluster for cluster.

Added

- Add support for EKS Anywhere with provider CloudStack

- Add support to upgrade Bare Metal cluster

- Add support for using Registry Mirror for Bare Metal

- Redhat-based node image support for vSphere, CloudStack and Bare Metal EKS Anywhere clusters

- Allow authenticated image pull using Registry Mirror for Ubuntu on vSphere cluster

- Add option to disable vSphere CSI driver #3148

- Add support for skipping load balancer deployment for Bare Metal so users can use their own load balancers #3608

- Add support to configure aws-iam-authenticator on workload clusters independent of management cluster #2814

- Add EKS Anywhere Packages support for remote management on workload clusters. (For more EKS Anywhere packages info: v0.12.0

)

- Add new EKS Anywhere Packages

- AWS Distro for OpenTelemetry (ADOT)

- Cert Manager

- Cluster Autoscaler

- Metrics Server

Fixed

- Remove special cilium network policy with

policyEnforcementMode set to always due to lack of pod network connectivity for vSphere CSI

- Fixed #3391

#3560

for AWSIamConfig upgrades on EKS Anywhere workload clusters

Added

- Add validate session permission for vsphere

Fixed

- Fix datacenter naming bug for vSphere #3381

- Fix os family validation for vSphere

- Fix controller overwriting secret for vSphere #3404

- Fix unintended rolling upgrades when upgrading from an older EKS-A version for CloudStack

Added

- Add some bundleRef validation

- Enable kube-rbac-proxy on CloudStack cluster controller’s metrics port

Fixed

- Fix issue with fetching EKS-D CRDs/manifests with retries

- Update BundlesRef when building a Spec from file

- Fix worker node upgrade inconsistency in Cloudstack

Added

- Add a preflight check to validate vSphere user’s permissions #2744

Changed

- Make

DiskOffering in CloudStackMachineConfig optional

Fixed

- Fix upgrade failure when flux is enabled #3091

#3093

- Add token-refresher to default images to fix import/download images commands

- Improve retry logic for transient issues with kubectl applies and helm pulls #3167

- Fix issue fetching curated packages images

Added

- Add

--insecure flag to import/download images commands #2878

Breaking Changes

- EKS Anywhere no longer distributes Ubuntu OVAs for use with EKS Anywhere clusters. Building your own Ubuntu-based nodes as described in Building node images

is the only supported way to get that functionality.

Added

- Add support for Kubernetes 1.23 #2159

- Add support for Support Bundle for validating control plane IP with vSphere provider

- Add support for aws-iam-authenticator on Bare Metal

- Curated Packages General Availability

- Added Emissary Ingress Curated Package

Changed

- Install and enable GitOps in the existing cluster with upgrade command

Changed

- Updated EKS Distro versions to latest release

Fixed

- Fixed control plane nodes not upgraded for same kube version #2636

Added

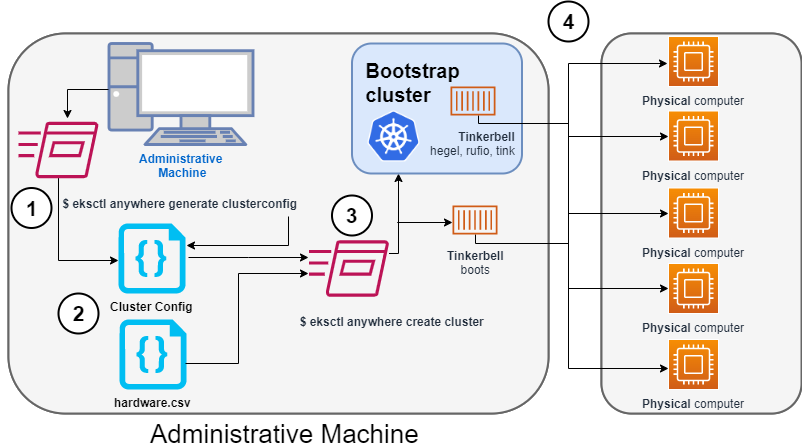

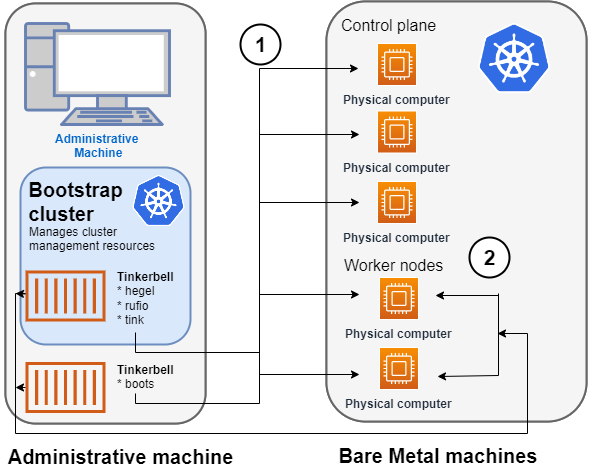

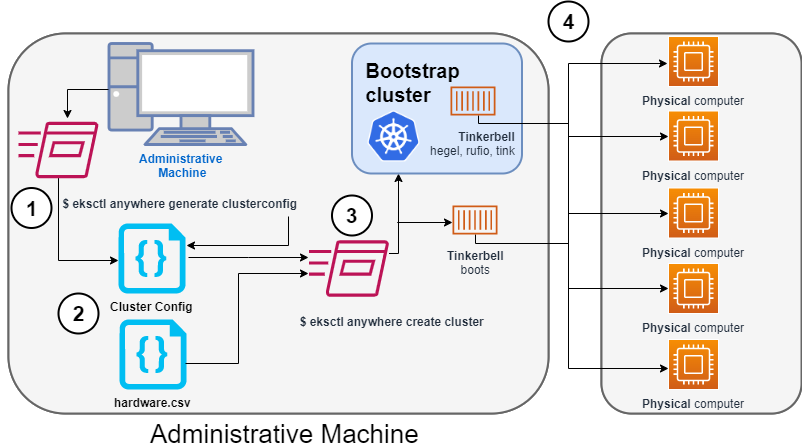

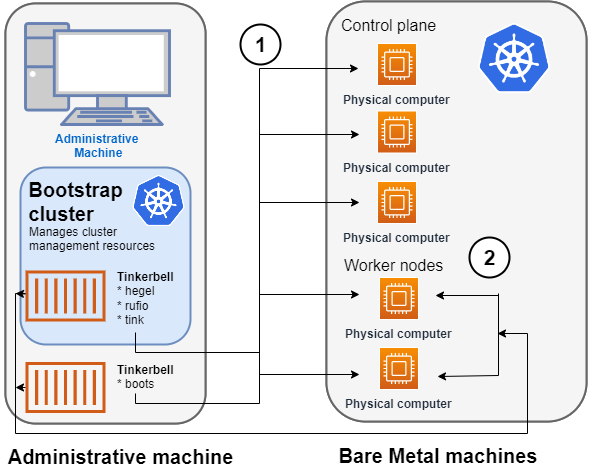

- Added support for EKS Anywhere on bare metal with provider tinkerbell

. EKS Anywhere on bare metal supports complete provisioning cycle, including power on/off and PXE boot for standing up a cluster with the given hardware data.

- Support for node CIDR mask config exposed via the cluster spec. #488

Changed

- Upgraded cilium from 1.9 to 1.10. #1124

- Changes for EKS Anywhere packages v0.10.0

Fixed

- Fix issue using self-signed certificates for registry mirror #1857

Fixed

- Fix issue by avoiding processing Snow images when URI is empty

Added

- Adding support to EKS Anywhere for a generic git provider as the source of truth for GitOps configuration management. #9

- Allow users to configure Cloud Provider and CSI Driver with different credentials. #1730

- Support to install, configure and maintain operational components that are secure and tested by Amazon on EKS Anywhere clusters.#2083

- A new Workshop section has been added to EKS Anywhere documentation.

- Added support for curated packages behind a feature flag #1893

Fixed

- Fix issue specifying proxy configuration for helm template command #2009

Fixed

- Fix issue with upgrading cluster from a previous minor version #1819

Fixed

- Fix issue with downloading artifacts #1753

Added

- SSH keys and Users are now mutable #1208

- OIDC configuration is now mutable #676

- Add support for Cilium’s policy enforcement mode #726

Changed

- Install Cilium networking through Helm instead of static manifest

v0.7.2

- 2022-02-28

Fixed

- Fix issue with downloading artifacts #1327

v0.7.1

- 2022-02-25

Added

- Support for taints in worker node group configurations #189

- Support for taints in control plane configurations #189

- Support for labels in worker node group configuration #486

- Allow removal of worker node groups using the

eksctl anywhere upgrade command #1054

v0.7.0

- 2022-01-27

Added

- Support for

aws-iam-authenticator

as an authentication option in EKS-A clusters #90

- Support for multiple worker node groups in EKS-A clusters #840

- Support for IAM Role for Service Account (IRSA) #601

- New command

upgrade plan cluster lists core component changes affected by upgrade cluster #499

- Support for workload cluster’s control plane and etcd upgrade through GitOps #1007

- Upgrading a Flux managed cluster previously required manual steps. These steps have now been automated.

#759

, #1019

- Cilium CNI will now be upgraded by the

upgrade cluster command #326

Changed

- EKS-A now uses Cluster API (CAPI) v1.0.1 and v1beta1 manifests, upgrading from v0.3.23 and v1alpha3 manifests.

- Kubernetes components and etcd now use TLS_ECDHE_RSA_WITH_AES_128_GCM_SHA256 as the

configured TLS cipher suite #657

,

#759

- Automated git repository structure changes during Flux component

upgrade workflow #577

v0.6.0 - 2021-10-29

Added

- Support to create and manage workload clusters #94

- Support for upgrading eks-anywhere components #93

, Cluster upgrades

- IMPORTANT: Currently upgrading existing flux managed clusters requires performing a few additional steps

. The fix for upgrading the existing clusters will be published in

0.6.1 release

to improve the upgrade experience.

- k8s CIS compliance #193

- Support bundle improvements #92

- Ability to upgrade control plane nodes before worker nodes #100

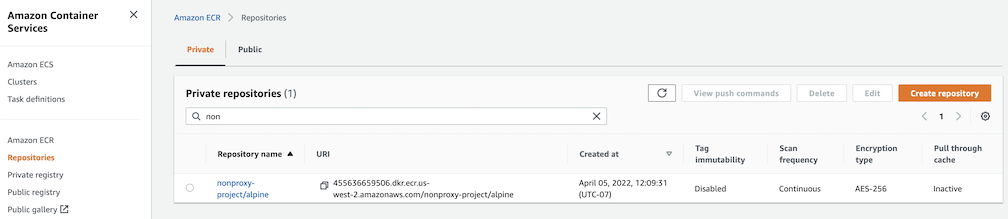

- Ability to use your own container registry #98

- Make namespace configurable for anywhere resources #177

Fixed

- Fix ova auto-import issue for multi-datacenter environments #437

- OVA import via EKS-A CLI sometimes fails #254

- Add proxy configuration to etcd nodes for bottlerocket #195

Removed

- overrideClusterSpecFile field in cluster config

v0.5.0

Added

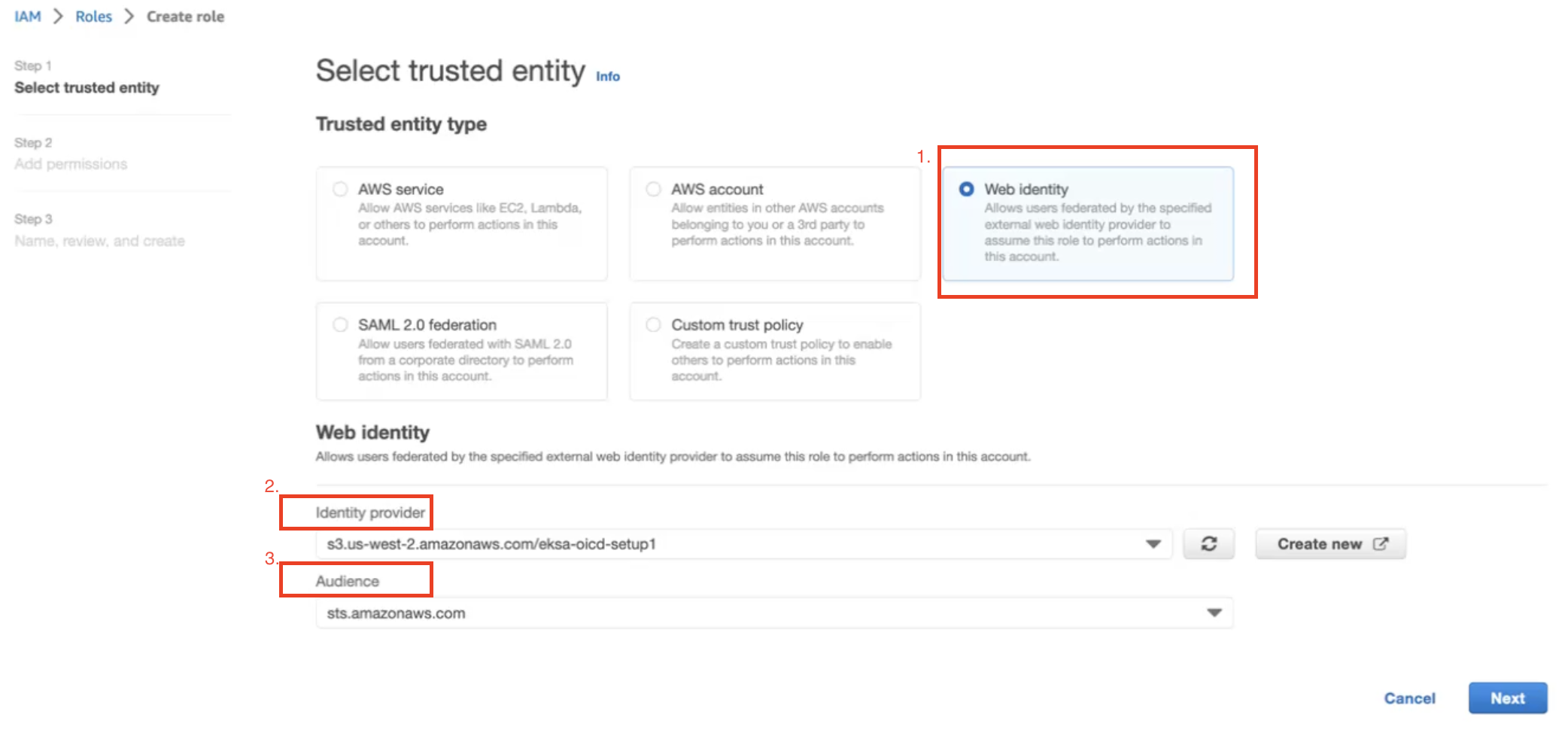

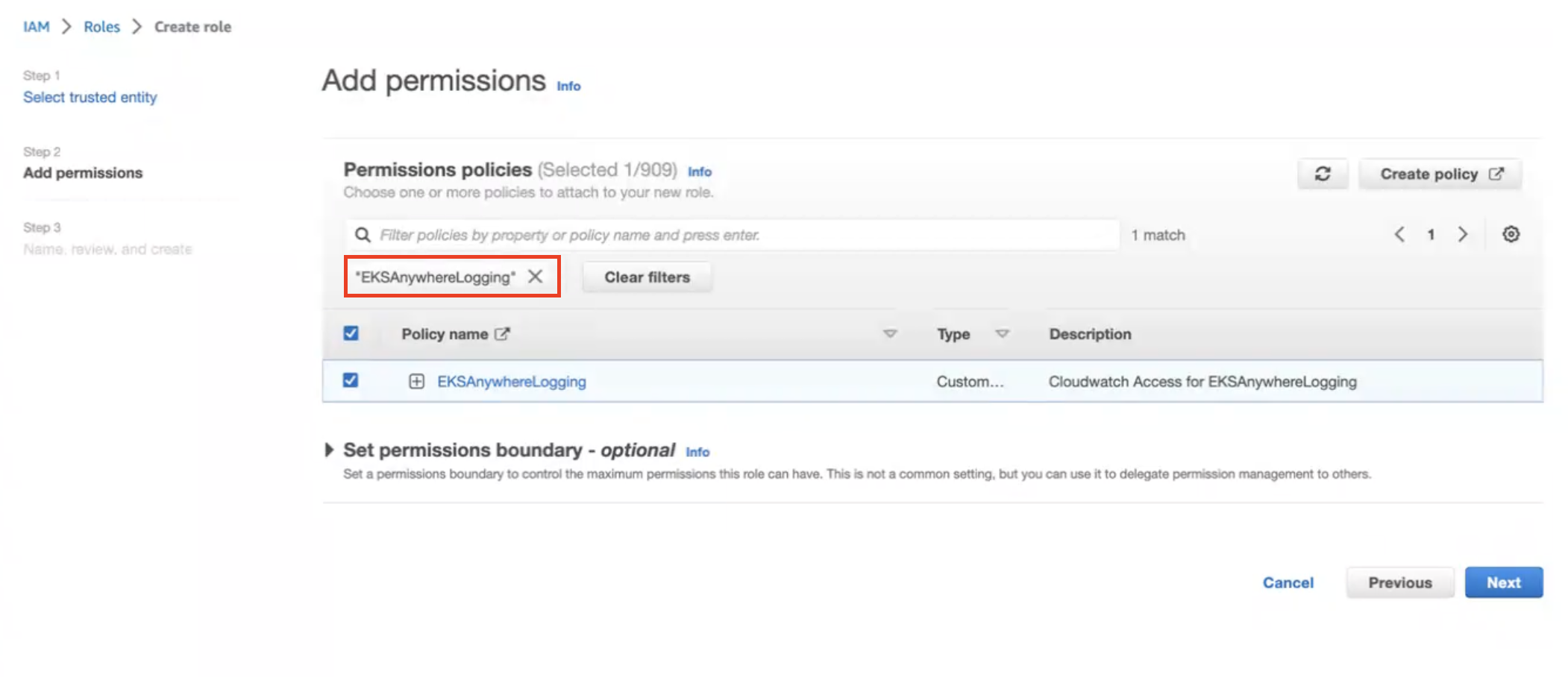

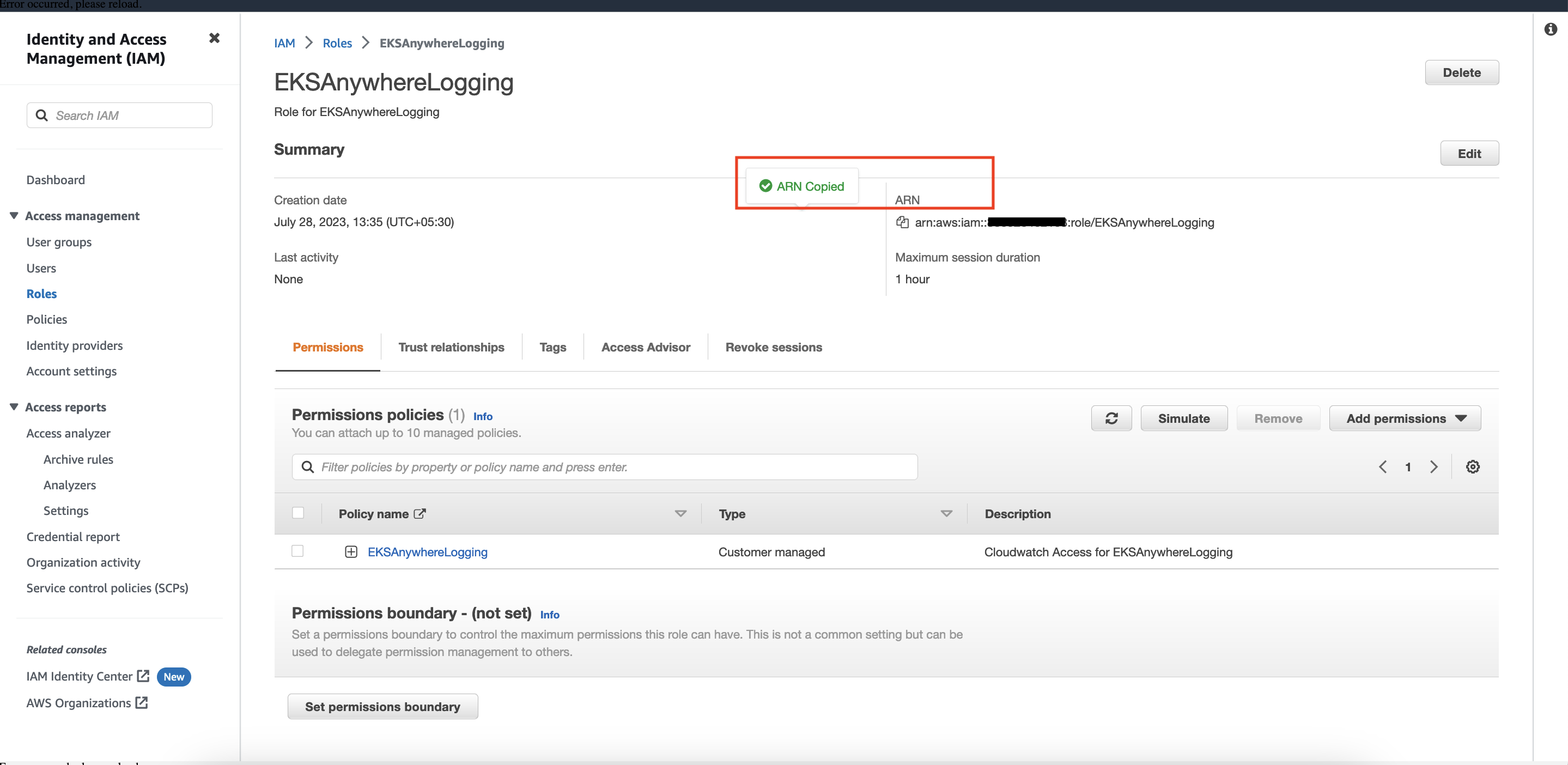

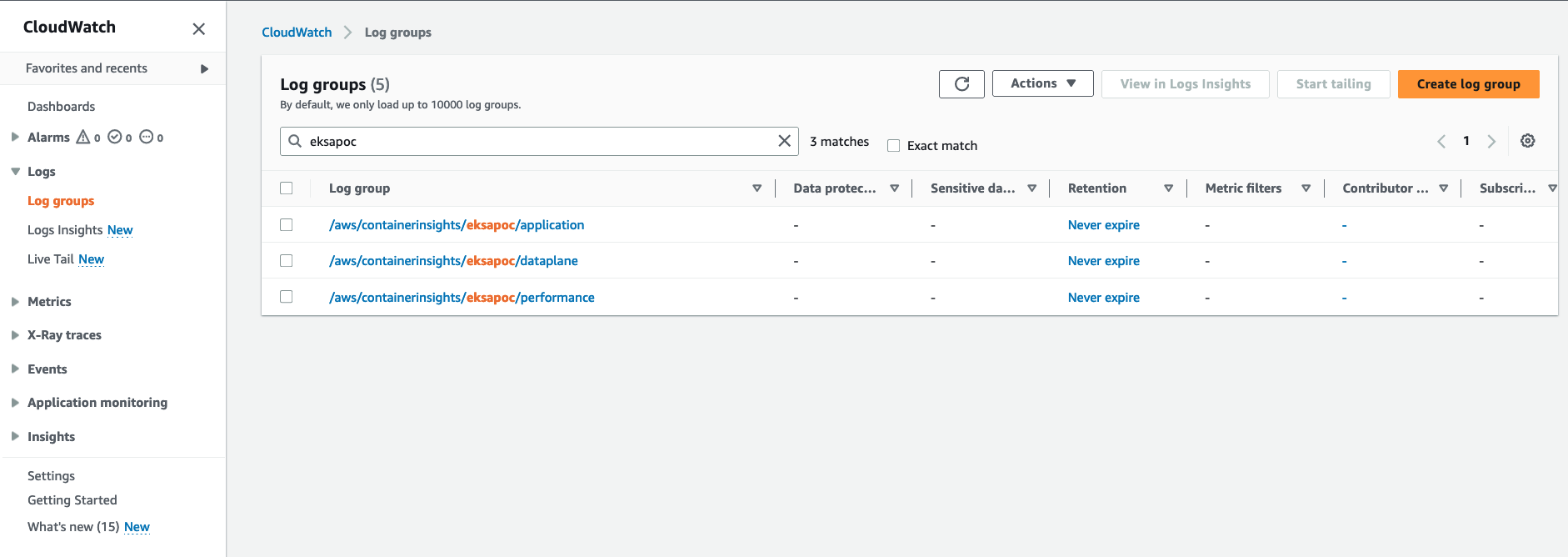

2.2 - Release Alerts

SNS Alerts for EKS Anywhere releases

EKS Anywhere uses Amazon Simple Notification Service (SNS) to notify availability of a new release.

It is recommended that your clusters are kept up to date with the latest EKS Anywhere release.

Please follow the instructions below to subscribe to SNS notification.

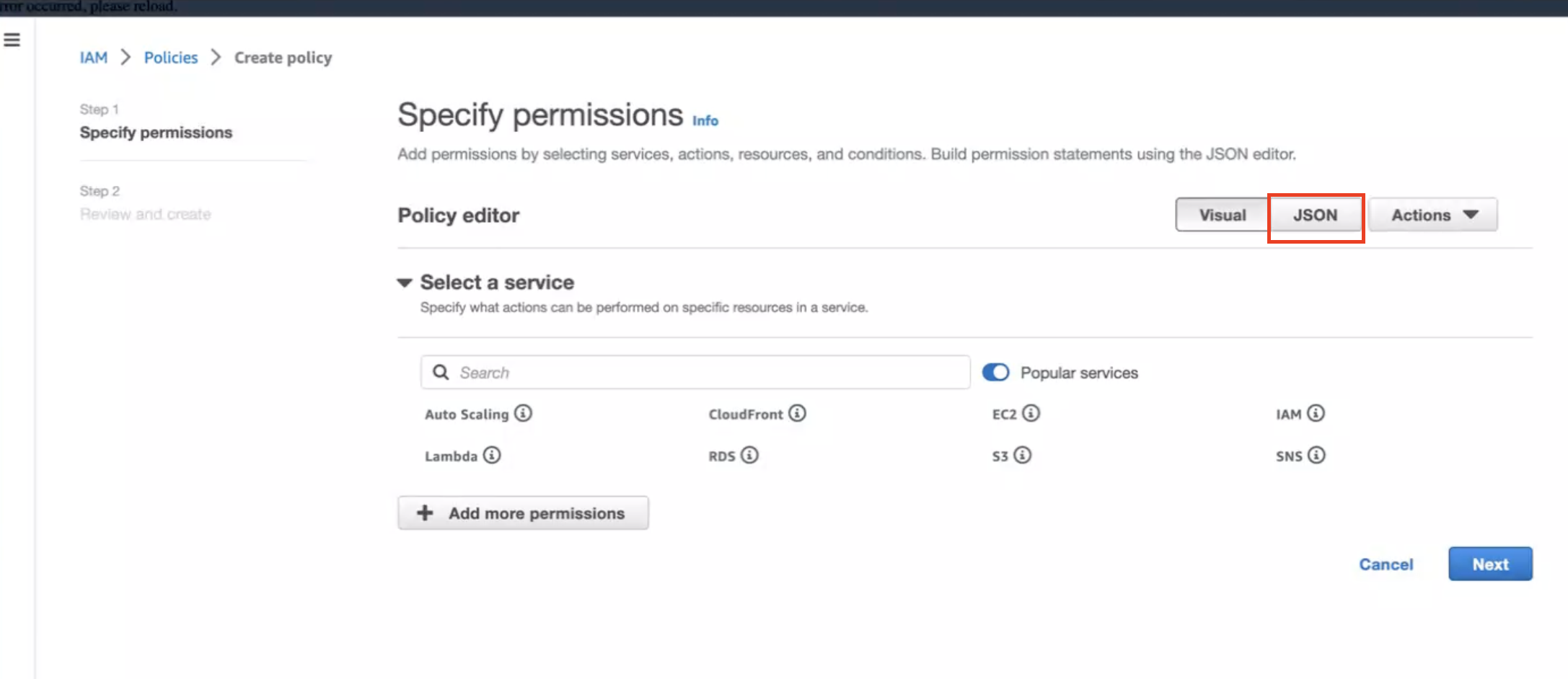

- Sign in to your AWS Account

- Select us-east-1 region

- Go to the SNS Console

- In the left navigation pane, choose “Subscriptions”

- On the Subscriptions page, choose “Create subscription”

- On the Create subscription page, in the Details section enter the following information

- Choose Create Subscription

- In few minutes, you will receive an email asking you to confirm the subscription

- Click the confirmation link in the email

3 - Concepts

The Concepts section contains an overview of the EKS Anywhere architecture, components, versioning, and support.

Most of the content in the EKS Anywhere documentation is specific to how EKS Anywhere deploys and manages Kubernetes clusters. For information on Kubernetes itself, reference the Kubernetes documentation.

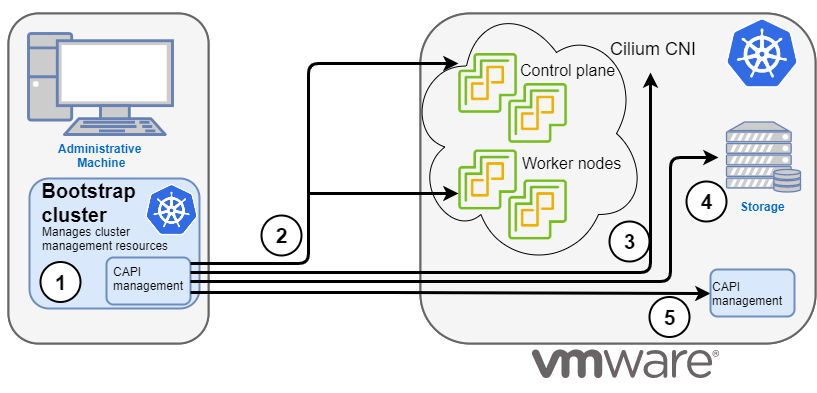

3.1 - EKS Anywhere Architecture

EKS Anywhere architecture overview

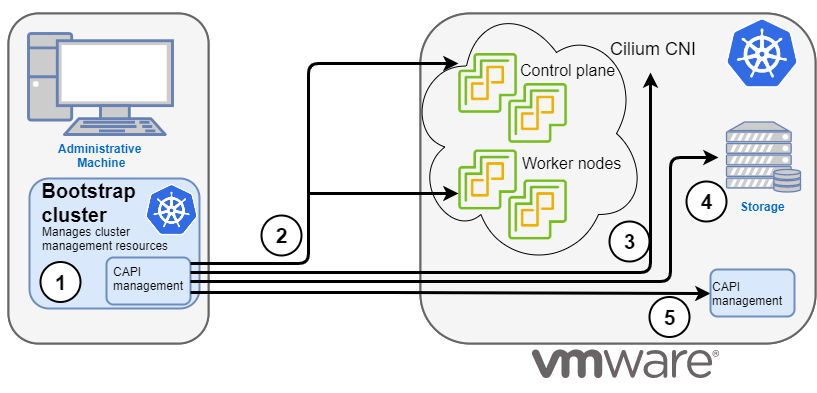

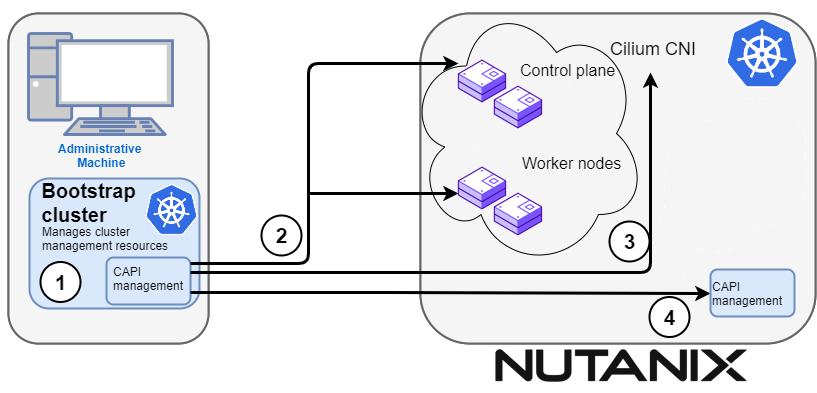

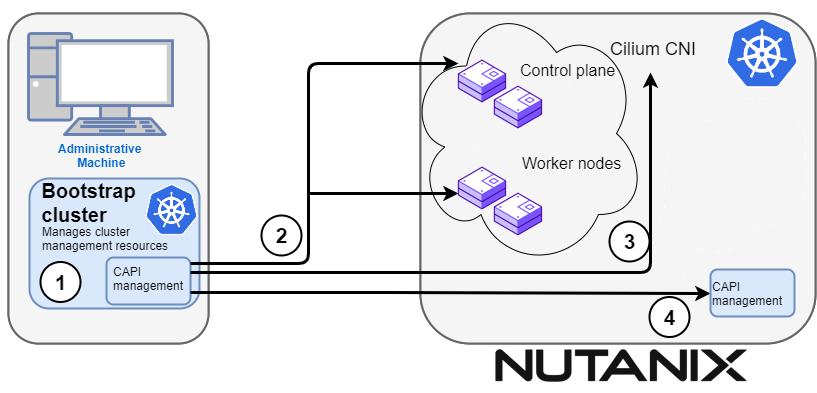

EKS Anywhere supports many different types of infrastructure including VMWare vSphere, bare metal, Nutanix, Apache CloudStack, and AWS Snow. EKS Anywhere is built on the Kubernetes sub-project called Cluster API

(CAPI), which is focused on providing declarative APIs and tooling to simplify the provisioning, upgrading, and operating of multiple Kubernetes clusters. EKS Anywhere inherits many of the same architectural patterns and concepts that exist in CAPI. Reference the CAPI documentation

to learn more about the core CAPI concepts.

Components

Each EKS Anywhere version includes all components required to create and manage EKS Anywhere clusters.

Administrative / CLI components

Responsible for lifecycle operations of management or standalone clusters, building images, and collecting support diagnostics. Admin / CLI components run on Admin machines or image building machines.

| Component |

Description |

| eksctl CLI |

Command-line tool to create, upgrade, and delete management, standalone, and optionally workload clusters. |

| image-builder |

Command-line tool to build Ubuntu and RHEL node images |

| diagnostics collector |

Command-line tool to produce support diagnostics bundle |

Management components

Responsible for infrastructure and cluster lifecycle management (create, update, upgrade, scale, delete). Management components run on standalone or management clusters.

| Component |

Description |

| CAPI controller |

Controller that manages core Cluster API objects such as Cluster, Machine, MachineHealthCheck etc. |

| EKS Anywhere lifecycle controller |

Controller that manages EKS Anywhere objects such as EKS Anywhere Clusters, EKS-A Releases, FluxConfig, GitOpsConfig, AwsIamConfig, OidcConfig |

| Curated Packages controller |

Controller that manages EKS Anywhere Curated Package objects |

| Kubeadm controller |

Controller that manages Kubernetes control plane objects |

| Etcdadm controller |

Controller that manages etcd objects |

| Provider-specific controllers |

Controller that interacts with infrastructure provider (vSphere, bare metal etc.) and manages the infrastructure objects |

| EKS Anywhere CRDs |

Custom Resource Definitions that EKS Anywhere uses to define and control infrastructure, machines, clusters, and other objects |

Cluster components

Components that make up a Kubernetes cluster where applications run. Cluster components run on standalone, management, and workload clusters.

| Component |

Description |

| Kubernetes |

Kubernetes components that include kube-apiserver, kube-controller-manager, kube-scheduler, kubelet, kubectl |

| etcd |

Etcd database used for Kubernetes control plane datastore |

| Cilium |

Container Networking Interface (CNI) |

| CoreDNS |

In-cluster DNS |

| kube-proxy |

Network proxy that runs on each node |

| containerd |

Container runtime |

| kube-vip |

Load balancer that runs on control plane to balance control plane IPs |

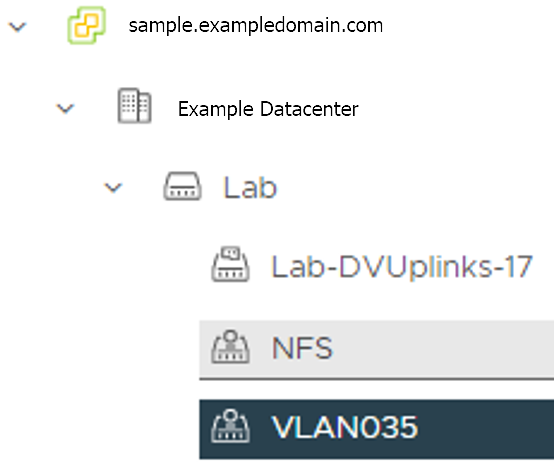

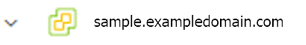

Deployment Architectures

EKS Anywhere supports two deployment architectures:

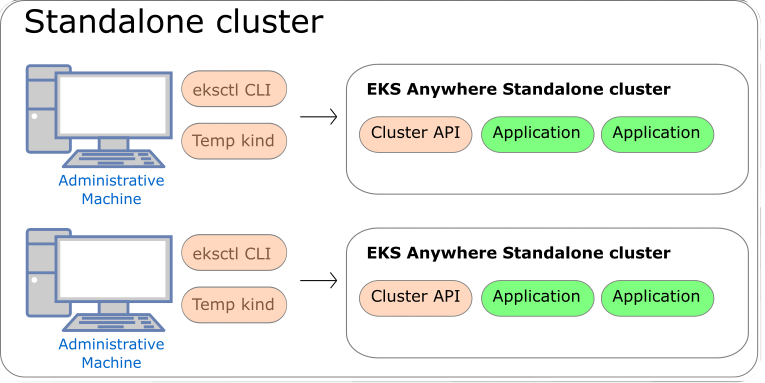

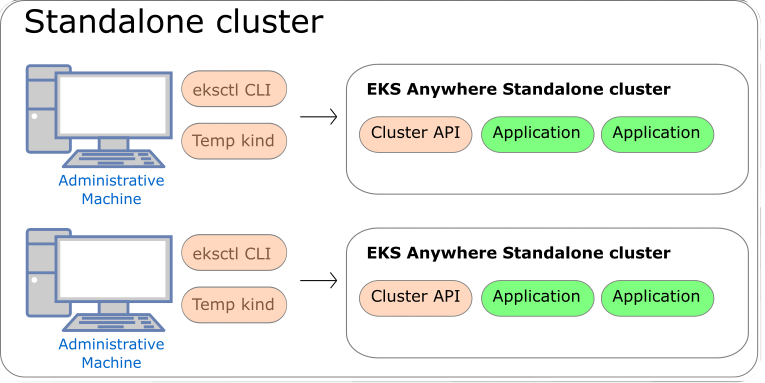

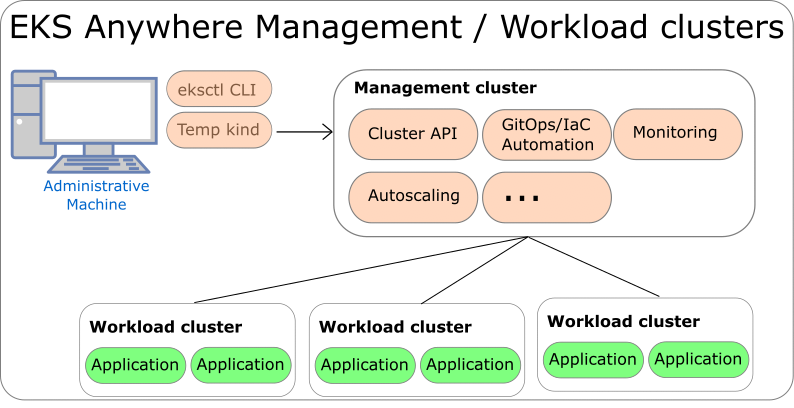

-

Standalone clusters: If you are only running a single EKS Anywhere cluster, you can deploy a standalone cluster. This deployment type runs the EKS Anywhere management components on the same cluster that runs workloads. Standalone clusters must be managed with the eksctl CLI. A standalone cluster is effectively a management cluster, but in this deployment type, only manages itself.

-

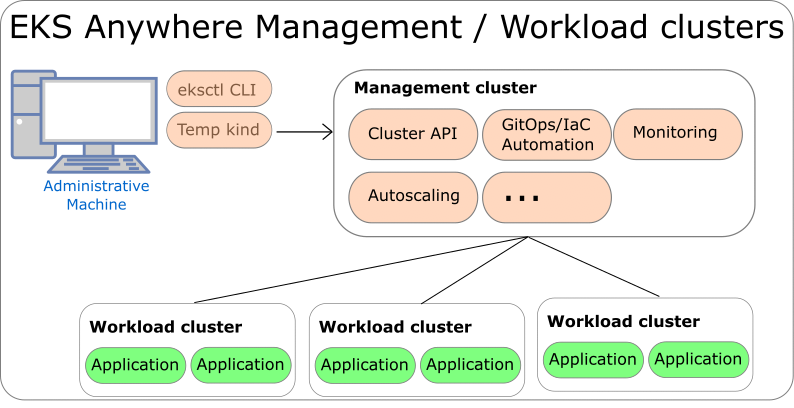

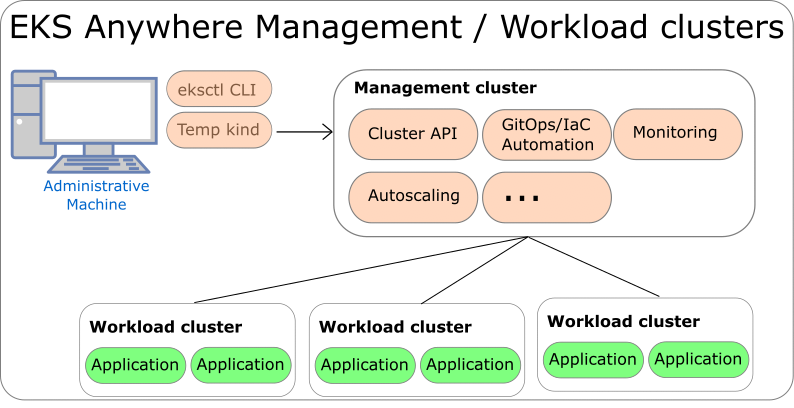

Management cluster with separate workload clusters: If you plan to deploy multiple EKS Anywhere clusters, it’s recommended to deploy a management cluster with separate workload clusters. With this deployment type, the EKS Anywhere management components are only run on the management cluster, and the management cluster can be used to perform cluster lifecycle operations on a fleet of workload clusters. The management cluster must be managed with the eksctl CLI, whereas workload clusters can be managed with the eksctl CLI or with Kubernetes API-compatible clients such as kubectl, GitOps, or Terraform.

If you use the management cluster architecture, the management cluster must run on the same infrastructure provider as your workload clusters. For example, if you run your management cluster on vSphere, your workload clusters must also run on vSphere. If you run your management cluster on bare metal, your workload cluster must run on bare metal. Similarly, all nodes in workload clusters must run on the same infrastructure provider. You cannot have control plane nodes on vSphere, and worker nodes on bare metal.

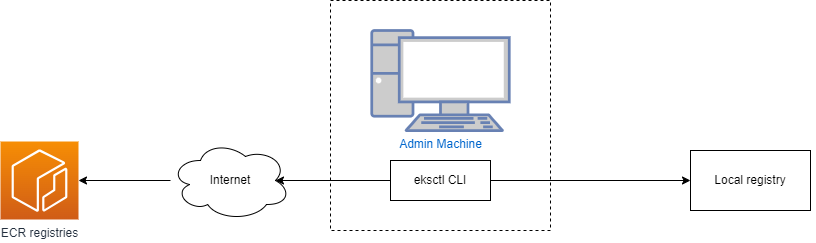

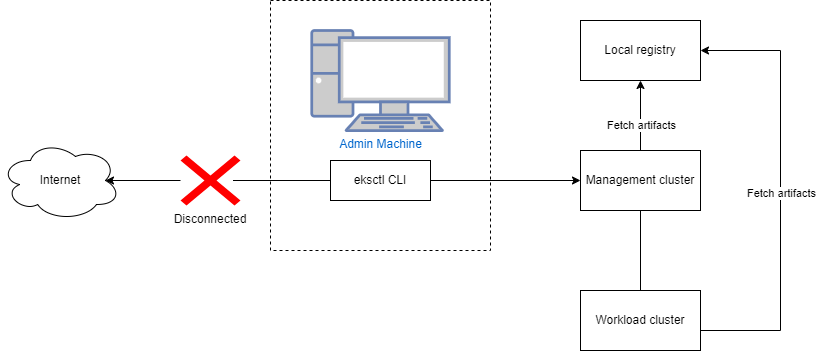

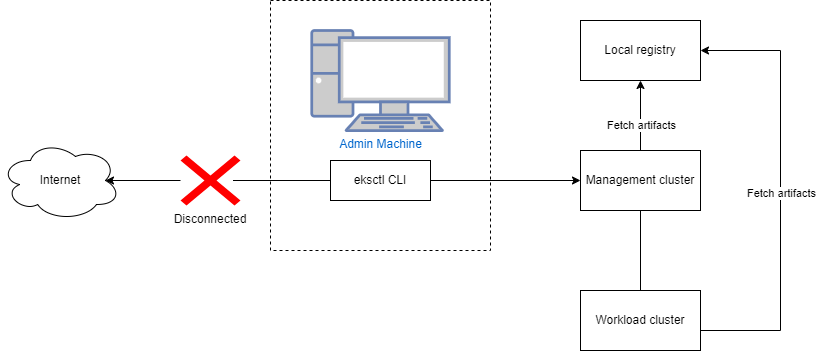

Both deployment architectures can run entirely disconnected from the internet and AWS Cloud. For information on deploying EKS Anywhere in airgapped environments, reference the Airgapped Installation page.

Standalone Clusters

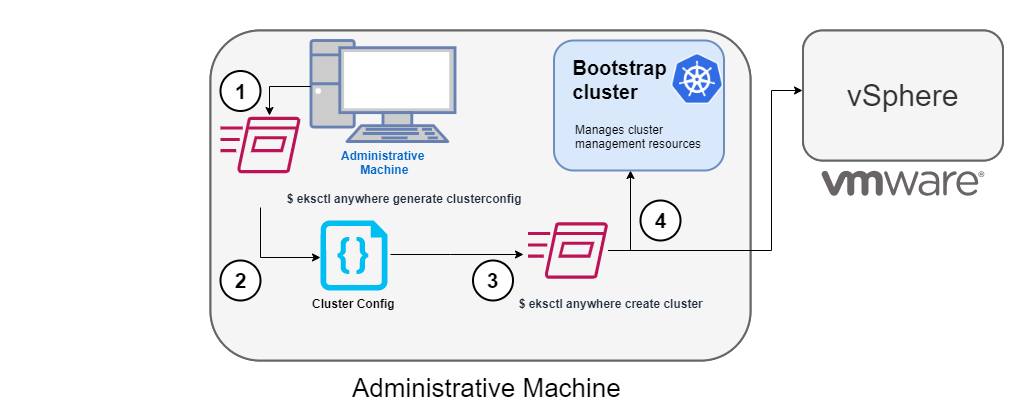

Technically, standalone clusters are the same as management clusters, with the only difference being that standalone clusters are only capable of managing themselves. Regardless of the deployment architecture you choose, you always start by creating a standalone cluster from an Admin machine.

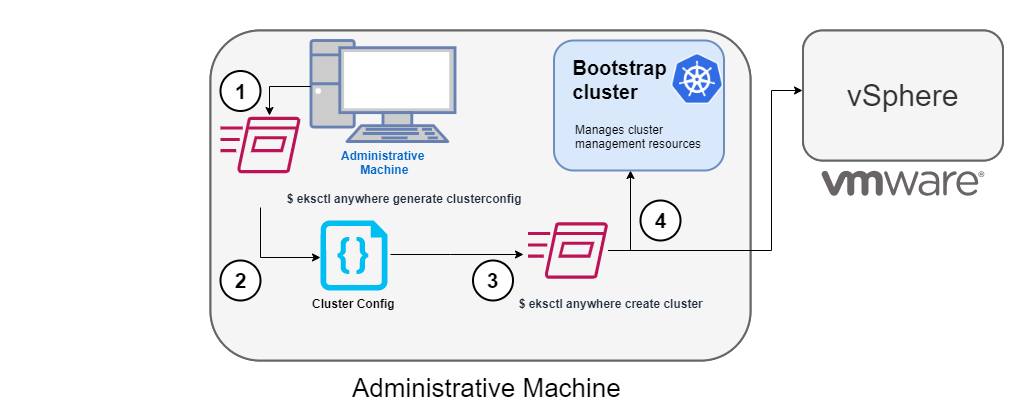

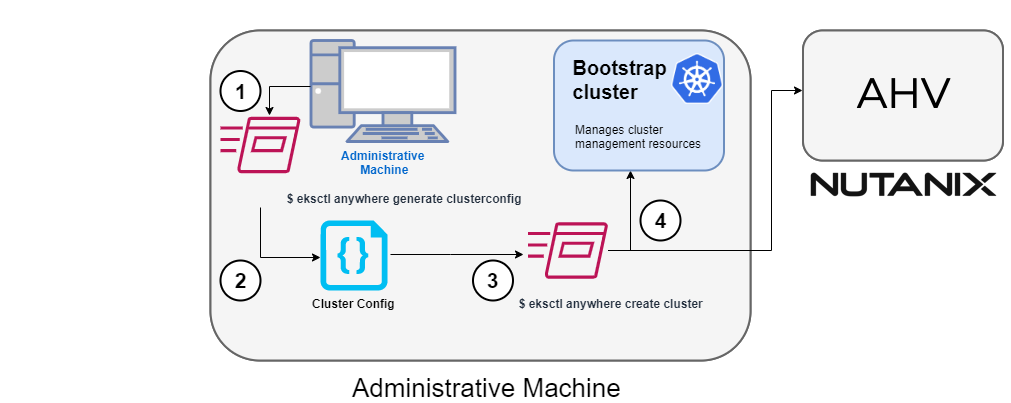

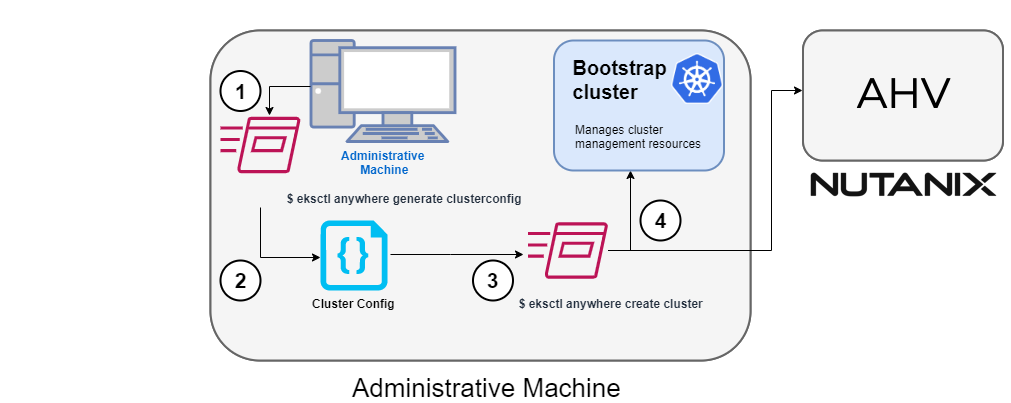

When you first create a standalone cluster, a temporary Kind bootstrap cluster is used on your Admin machine to pull down the required components and bootstrap your standalone cluster on the infrastructure of your choice.

Management Clusters

Management clusters are long-lived EKS Anywhere clusters that can create and manage a fleet of EKS Anywhere workload clusters. Management clusters run both management and cluster components. Workload clusters run cluster components only and are where your applications run. Management clusters enable you to centrally manage your workload clusters with Kubernetes API-compatible clients such as kubectl, GitOps, or Terraform, and prevent management components from interfering with the resource usage of your applications running on workload clusters.

3.2 - Versioning

EKS Anywhere and Kubernetes version support policy and release cycle

This page contains information on the EKS Anywhere release cycle and support for Kubernetes versions.

When creating new clusters, we recommend that you use the latest available Kubernetes version supported by EKS Anywhere. If your application requires a specific version of Kubernetes, you can select older versions. You can create new EKS Anywhere clusters on any Kubernetes version that the EKS Anywhere version supports.

You must have an EKS Anywhere Enterprise Subscription

to receive support for EKS Anywhere from AWS.

Kubernetes versions

Each EKS Anywhere version includes support for multiple Kubernetes minor versions.

The release and support schedule for Kubernetes versions in EKS Anywhere aligns with the Amazon EKS standard support schedule as documented on the Amazon EKS Kubernetes release calendar.

A minor Kubernetes version is under standard support in EKS Anywhere for 14 months after it’s released in EKS Anywhere. EKS Anywhere currently does not offer extended version support for Kubernetes versions. If you are interested in extended version support for Kubernetes versions in EKS Anywhere, please upvote or comment on EKS Anywhere GitHub Issue #6793.

Patch releases for Kubernetes versions are included in EKS Anywhere as they become available in EKS Distro.

Unlike Amazon EKS, there are no automatic upgrades in EKS Anywhere and you have full control over when you upgrade. On the end of support date, you can still create new EKS Anywhere clusters with the unsupported Kubernetes version if the EKS Anywhere version you are using includes it. Any existing EKS Anywhere clusters with the unsupported Kubernetes version continue to function. As new Kubernetes versions become available in EKS Anywhere, we recommend that you proactively update your clusters to use the latest available Kubernetes version to remain on versions that receive CVE patches and bug fixes.

Reference the table below for release and support dates for each Kubernetes version in EKS Anywhere. The Release Date column denotes the EKS Anywhere release date when the Kubernetes version was first supported in EKS Anywhere. Note, dates with only a month and a year are approximate and are updated with an exact date when it’s known.

| Kubernetes Version |

Release Date |

Support End |

| 1.29 |

February 2, 2024 |

March, 2025 |

| 1.28 |

October 10, 2023 |

December, 2024 |

| 1.27 |

June 6, 2023 |

August, 2024 |

| 1.26 |

March 3, 2023 |

June, 2024 |

| 1.25 |

January 1, 2023 |

May, 2024 |

| 1.24 |

October 10, 2022 |

February 2, 2024 |

| 1.23 |

August 8, 2022 |

October 10, 2023 |

| 1.22 |

March 3, 2022 |

June 6, 2023 |

- Older Kubernetes versions are omitted from this table for brevity, reference the EKS Anywhere GitHub

for older versions.

EKS Anywhere versions

Each EKS Anywhere version includes all components required to create and manage EKS Anywhere clusters. This includes but is not limited to:

- Administrative / CLI components (eksctl CLI, image-builder, diagnostics-collector)

- Management components (Cluster API controller, EKS Anywhere controller, provider-specific controllers)

- Cluster components (Kubernetes, Cilium)